The integration of generative AI agents into business processes is poised to accelerate as organizations recognize the untapped potential of these technologies. Advancements in multimodal artificial intelligence (AI), where agents can understand and generate not just text but also images, audio, and video, will further broaden their applications. This post will discuss agentic AI driven architecture and ways of implementing.

The emergence of generative AI agents in recent years has contributed to the transformation of the AI landscape, driven by advances in large language models (LLMs) and natural language processing (NLP). Companies like Anthropic, Cohere, and Amazon have made significant strides in developing powerful language models capable of understanding and generating human-like content across multiple modalities, revolutionizing how businesses integrate and utilize artificial intelligence in their processes.

These AI agents have demonstrated remarkable versatility, being able to perform tasks ranging from creative writing and code generation to data analysis and decision support. Their ability to engage in intelligent conversations, provide context-aware responses, and adapt to diverse domains has revolutionized how businesses approach problem-solving, customer service, and knowledge dissemination.

One of the most significant impacts of generative AI agents has been their potential to augment human capabilities through both synchronous and asynchronous patterns. In synchronous orchestration, just like in traditional process automation, a supervisor agent orchestrates the multi-agent collaboration, maintaining a high-level view of the entire process while actively directing the flow of information and tasks. This approach allows businesses to offload repetitive and time-consuming tasks in a controlled, predictable manner.

Alternatively, asynchronous choreography follows an event-driven pattern where agents operate autonomously, triggered by events or state changes in the system. In this model, agents publish events or messages that other agents can subscribe to, creating a workflow that emerges from their collective behavior. These patterns have proven particularly valuable in enhancing customer experiences, where agents can provide round-the-clock support, resolve issues promptly, and deliver personalized recommendations through either orchestrated or event-driven interactions, leading to increased customer satisfaction and loyalty.

Agentic AI architecture

Agentic AI architecture is a shift in process automation through autonomous agents towards the capabilities of AI, with the purpose of imitating cognitive abilities and enhancing the actions of traditional autonomous agents. This architecture can enable businesses to streamline operations, enhance decision-making processes, and automate complex tasks in new ways.

Much like traditional business process automation through technology, the agentic AI architecture is the design of AI systems designed to resolve complex problems with limited or indirect human intervention. These systems are composed of multiple AI agents that converse with each other or execute complex tasks through a series of choreographed or orchestrated processes. This approach empowers AI systems to exhibit goal-directed behavior, learn from experience, and adapt to changing environments.

The difference between a single agent invocation and a multi-agent collaboration lies in the complexity and the number of agents involved in the process.

When you interact with a digital assistant like Alexa, you’re typically engaging with a single agent, also known as a conversational agent. This agent processes your request, such as setting a timer or checking the weather, and provides a response without needing to consult other agents.

Now, imagine expanding this interaction to include multiple agents working together. Let’s start with a simple travel booking scenario:

Your interaction begins with telling a travel planning agent about your desired trip. In this first step, the AI model, in this case an LLM, is acting as an interpreter and user experience interface between your natural language input and the structured information needed by the travel planning system. It’s processing your request, which might be a complex statement like “I want to plan a week-long beach vacation in Hawaii for my family of four next month,” and extracting key details such as the destination, duration, number of travelers, and approximate dates.

The LLM is also likely to infer additional relevant information that wasn’t explicitly stated, such as the need for family-friendly accommodations or activities. It might ask follow-up questions to clarify ambiguous points or gather more specific preferences. Essentially, the LLM is transforming your casual, conversational input into a structured set of travel requirements that can be used by the specialized booking agents in the subsequent steps of the workflow.

This initial interaction sets the foundation for the entire multi-agent workflow, making sure that the travel planning agent has a clear understanding of your needs before engaging other specialized agents.

By adding another agent, the flight booking agent, the travel planning agent can call upon it to find suitable flights. The travel planning agent needs to provide the flight booking agent with relevant information (dates, destinations), and wait for and process the flight booking agent’s response, to incorporate the flight options into its overall plan

Now, let’s add another agent to the workflow; a hotel booking agent to support finding accommodations. With this addition, the travel planning agent must also communicate with the hotel booking agent, which needs to make sure that the hotel dates align with the flight dates and provide the information back to the overall plan to include both flight and hotel options.

As we continue to add agents, such as a car rental agent or a local activities agent, each new addition receives relevant information from the travel planning agent, performs its specific task, and returns its results to be incorporated into the overall plan. The travel planning agent acts not only as the user experience interface, but also as a coordinator, deciding when to involve each specialized agent and how to combine their inputs into a cohesive travel plan.

This multi-agent workflow allows for more complex tasks to be accomplished by taking advantage of the specific capabilities of each agent. The system remains flexible, because agents can be added or removed based on the specific needs of each request, without requiring significant changes to the existing agents and minimal change to the overall workflow.

For more on the benefits of breaking tasks into agents, see How task decomposition and smaller LLMs can make AI more affordable.

Process automation with agentic AI architecture

The preceding scenario, just like in traditional process automation, is a common orchestration pattern, where the multi-agent collaboration is orchestrated by a supervisor agent. The supervisor agent acts like a conductor leading an orchestra, telling each instrument when to play and how to harmonize with others. For this approach, Amazon Bedrock Agents enables generative AI applications to execute multi-step tasks orchestrated by an agent and create a multi-agent collaboration with Amazon Bedrock Agents to solve complex tasks. This is done by designating an Amazon Bedrock agent as a supervisor agent, associating one or more collaborator agents with the supervisor. For more details, read on creating and configuring Amazon Bedrock Agents and Use multi-agent collaboration with Amazon Bedrock Agents.

The following diagram illustrates the supervisor agent methodology.

Supervisor agent methodology

Following traditional process automation patterns, the other end of the spectrum to synchronous orchestration would be asynchronous choreography: an asynchronous event-driven multi-agent workflow. In this approach, there would be no central orchestrating agent (supervisor). Agents operate autonomously where actions are triggered by events or changes in a system’s state and agents publish events or messages that other agents can subscribe to. In this approach, the workflow emerges from the collective behavior of the agents reacting to events asynchronously. It’s more like a jazz improvisation, where each musician responds to what others are playing without a conductor. The following diagram illustrates this event-driven workflow.

Event-driven workflow methodology

The event-driven pattern in asynchronous systems operates without predefined workflows, creating a dynamic and potentially chaotic processing environment. While agents subscribe to and publish messages through a central event hub, the flow of processing is determined organically by the message requirements and the available subscribed agents. Although the resulting pattern may resemble a structured workflow when visualized, it’s important to understand that this is emergent behavior rather than orchestrated design. The absence of centralized workflow definitions means that message processing occurs naturally based on publication timing and agent availability, creating a fluid and adaptable system that can evolve with changing requirements.

The choice between synchronous orchestration and asynchronous event-driven patterns fundamentally shapes how agentic AI systems operate and scale. Synchronous orchestration, with its supervisor agent approach, provides precise control and predictability, making it ideal for complex processes requiring strict oversight and sequential execution. This pattern excels in scenarios where the workflow needs to be tightly managed, audited, and debugged. However, it can create bottlenecks as all operations must pass through the supervisor agent. Conversely, asynchronous event-driven systems offer greater flexibility and scalability through their distributed nature. By allowing agents to operate independently and react to events in real-time, these systems can handle dynamic scenarios and adapt to changing requirements more readily. While this approach may introduce more complexity in tracking and debugging workflows, it excels in scenarios requiring high scalability, fault tolerance, and adaptive behavior. The decision between these patterns often depends on the specific requirements of the system, balancing the need for control and predictability against the benefits of flexibility and scalability.

Getting the best of both patterns

You can use a single agent to route messages to other agents based on the context of the event data (message) at runtime, with no prior knowledge of the downstream agents, without having to rely on each agent subscribing to an event hub. This is traditionally known as the message broker or event broker pattern, which for the purpose of this article we will call an agent broker pattern, to represent brokering of messages to AI agents. The agent broker pattern is a hybrid approach that combines elements of both centralized synchronous orchestration and distributed asynchronous event-driven systems.

The key to this pattern is that a single agent acts as a central hub for message distribution but doesn’t control the entire workflow. The broker agent determines where to send each message based on its content or metadata, making routing decisions at runtime. The processing agents are decoupled from each other and from the message source, only interacting with the broker to receive messages. The agent broker pattern is different from the supervisor pattern because it awaits a response from collaborating agents by routing a message to an agent and not awaiting a response. The following diagram illustrates the agent broker methodology.

Agent broker methodology

Following an agent broker pattern, the system is still fundamentally event-driven, with actions triggered by the arrival of messages. New agents can be added to handle specific types of messages without changing the overall system architecture. Understanding how to implement this type of pattern will be explained later in this post.

This pattern is often used in enterprise messaging systems, microservices architectures, and complex event processing systems. It provides a balance between the structure of orchestrated workflows and the flexibility of pure event-driven systems.

Agentic architecture with the Amazon Bedrock Converse API

Traditionally, we might have had to sacrifice some flexibility in the broker pattern by having to update the routing logic in the broker when adding additional processes (agents) to the architecture. This is, however, not the case when using the Amazon Bedrock Converse API. With the Converse API, we can call a tool to complete an Amazon Bedrock model response. The only change is the additional agent added to the collaboration stored as configuration outside of the broker.

To let a model use a tool to complete a response for a message, the message and the definitions for one or more tools (agents) are sent to the model. If the model determines that one of the tools can help generate a response, it returns a request to use the tool.

AWS AppConfig, a capability of AWS Systems Manager, is used to store each of the agents’ tool context data as a single configuration in a managed data store, to be sent to the Converse API tool request. By using AWS Lambda as the message broker to receive all message and send requests to the Converse API with the tool context stored in AWS AppConfig, the architecture allows for adding additional agents to the system without having to update the routing logic, by ‘registering’ agents as ‘tool context’ in the configuration stored in AWS AppConfig, to be read by Lambda at run time (event message received). For more information about when to use AWS Config, see AWS AppConfig use cases.

Implementing the agent broker pattern

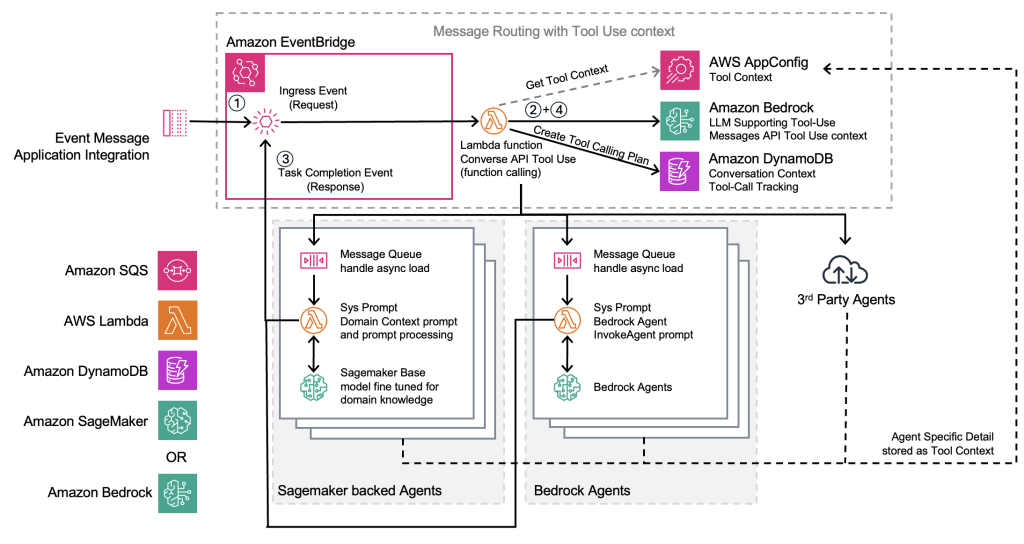

The following diagram demonstrates how Amazon EventBridge and Lambda act as a central message broker, with the Amazon Bedrock Converse API to let a model use a tool in a conversation to dynamically route messages to appropriate AI agents.

Agent broker architecture

Messages sent to EventBridge are routed through an EventBridge rule to Lambda. There are three tasks the EventBridge Lambda function performs as the agent broker:

- Query AWS AppConfig for all agents’ tool context. An agent tool context is a description of the agent’s capability along with the Amazon Resource Name (ARN) or URL of the agent’s message ingress.

- Provide the agent tool context along with the inbound event message to the Amazon Bedrock LLM through the Converse API; in this example, using an Amazon Bedrock tools-compatible LLM. The LLM, using the Converse API, combines the event message context compared to the agent tool context to provide a response back to the requesting Lambda function, containing the recommended tool or tools that should be used to process the message.

- Receive the response from the Converse API request containing one or more tools that should be called to process the event message, and hands the event message to the ingress of the recommended tools.

In this example, the architecture demonstrates brokering messages asynchronously to an Amazon SageMaker based agent, an Amazon Bedrock agent, and an external third-party agent, all from the same agent broker.

Although the brokering Lambda function could connect directly to the SageMaker or Amazon Bedrock agent API, the architecture provides for adaptability and scalability in message throughput, allowing messages from the agent broker to be queued, in this example with Amazon Simple Queue Service (Amazon SQS), and processed according to the capability of the receiving agent. For adaptability, the Lambda function subscribed to the agent ingress queue provides additional system prompts (pre-prompting of the LLM for specific tool context) and message formatted, and required functions for the expected input and output of the agent request.

To add new agents to the system, the only integration requirements are to update the AWS AppConfig with the new agent tool context (description of the agents’ capability and ingress endpoint), and making sure the brokering Lambda function has permissions to write to the agent ingress endpoint.

Agents can be added to the system without rewriting the Lambda function or integration that requires downtime, allowing the new agent to be used on the next instantiation of the brokering Lambda function.

Implementing the supervisor pattern with an agent broker

Building upon the agent broker pattern, the architecture can be extended to handle more complex, stateful interactions. Although the broker pattern effectively uses AWS AppConfig and Amazon Bedrock’s Converse API tool use capability for dynamic routing, its unidirectional nature has limitations. Events flow in and are distributed to agents, but complex scenarios like travel booking require maintaining context across multiple agent interactions. This is where the supervisor pattern provides additional capabilities without compromising the flexible routing we achieved with the broker pattern.

Using the example of the travel booking agent: the example has the broker agent and several task-based agents that events will be pushed to. When processing a request like “Book a 3-night trip to Sydney from Melbourne during the first week of September for 2 people”, we encounter several challenges. Although this statement contains clear intent, it lacks critical details that the agent might need, such as:

- Specific travel dates

- Accommodation preferences and room configurations

The broker pattern alone can’t effectively manage these information gaps while maintaining context between agent interactions. This is where adding the capability of a supervisor to the broker agent provides:

- Contextual awareness between events and agent invocations

- Bi-directional information flow capabilities

The following diagram illustrates the supervisor pattern workflow

Supervisor pattern architecture

When a new event enters the system, the workflow initiates the following steps:

- The event is assigned a unique identifier for tracking

- The supervisor performs the following actions:

- Evaluates which agents to invoke (brokering)

- Creates a new state record with the identifier and timestamp

- Provides this contextual information to the selected agents along with their invocation parameters

- Agents process their tasks and emit ‘task completion’ events back to EventBridge

- The supervisor performs the following actions:

- Collects and processes completed events

- Evaluates the combined results and context

- Determines if additional agent invocations are needed

- Continues this cycle until all necessary actions are completed

This pattern handles scenarios where agents might return varying results or request additional information. The supervisor can either:

- Derive missing information from other agent responses

- Request additional information from the source

- Coordinate with other agents to resolve information gaps

To handle information gaps without architectural modifications, we can introduce an answers agent to the existing system. This agent operates within the same framework as other agents, but specializes in context resolution. When agents report incomplete information or require clarification, the answers agent can:

- Process queries about missing information

- Emit task completion events with enhanced context

- Allow the supervisor to resume workflow execution with newly available information, the same way that it would after another agent emits its task-completion event.

This enhancement enables complex, multi-step workflows while maintaining the system’s scalability and flexibility. The supervisor can manage dependencies between agents, handle partial completions, and make sure that the necessary information is gathered before finalizing tasks.

Implementation considerations:

Implementing the supervisor pattern on top of the existing broker agent architecture provides the advantages of both the broker pattern and the complex state management of orchestration. The state management can be handled through Amazon DynamoDB, and maintaining the use of EventBridge for event routing and AWS AppConfig for agent configuration. The Amazon Bedrock Converse API continues to play a crucial role in agent selection, but now with added context from the supervisor’s state management. This allows you to preserve the dynamic routing capabilities we established with the broker pattern while adding the sophisticated workflow management needed for complex, multi-step processes.

Conclusion

Agentic AI architecture, powered by Amazon Bedrock and AWS services, represents a leap forward in the evolution of automated AI systems. By combining the flexibility of event-driven systems with the power of generative AI, this architecture enables businesses to create more adaptive, scalable, and intelligent automated processes. The agent broker pattern offers a robust solution for dynamically routing complex tasks to specialized AI agents, and the agent supervisor pattern extends these capabilities to handle sophisticated, context-aware workflows.

These patterns take advantage of the strengths of the Amazon Bedrock’s Converse API, Lambda, EventBridge, and AWS AppConfig to create a flexible and extensible system. The broker pattern excels at dynamic routing and seamless agent integration, while the supervisor pattern adds crucial state management and contextual awareness for complex, multi-step processes. Together, they provide a comprehensive framework for building sophisticated AI systems that can handle both simple routing and complex, stateful interactions.

This architecture not only streamlines operations, but also opens new possibilities for innovation and efficiency across various industries. Whether implementing simple task routing or orchestrating complex workflows requiring maintained context, organizations can build scalable, maintainable AI systems that evolve with their needs while maintaining operational stability.

To get started with an agentic AI architecture, consider the following next steps:

- Explore Amazon Bedrock – If you haven’t already, sign up for Amazon Bedrock and experiment with its powerful generative AI models and APIs. Familiarize yourself with the Converse API and its tool use capabilities.

- Prototype your own agent broker – Use the architecture outlined in this post as a starting point to build a proof-of-concept agent broker system tailored to your organization’s needs. Start small with a few specialized agents and gradually expand.

- Identify use cases – Analyze your current business processes to identify areas where an agentic AI architecture could drive significant improvements. Consider complex, multi-step tasks that could benefit from AI assistance.

- Stay informed – Keep up with the latest developments in AI and cloud technologies. AWS regularly updates its offerings, so stay tuned for new features that could enhance your agentic AI systems.

- Collaborate and share – Join AI and cloud computing communities to share your experiences and learn from others. Consider contributing to open-source projects or writing about your implementation to help advance the field.

- Invest in training – Make sure your team has the necessary skills to work with these advanced AI technologies. Consider AWS training and certification programs to build expertise in your organization.

By embracing an agentic AI architecture, you’re not just optimizing your current processes – you’re positioning your organization at the forefront of the AI revolution. Start your journey today and unlock the full potential of AI-driven automation for your business.

About the Authors

Aaron Sempf is Next Gen Tech Lead for the AWS Partner Organization in Asia-Pacific and Japan. With over 20 years in distributed system engineering design and development, he focuses on solving for large scale complex integration and event driven systems. In his spare time, he can be found coding prototypes for autonomous robots, IoT devices, distributed solutions, and designing agentic architecture patterns for generative AI assisted business automation.

Aaron Sempf is Next Gen Tech Lead for the AWS Partner Organization in Asia-Pacific and Japan. With over 20 years in distributed system engineering design and development, he focuses on solving for large scale complex integration and event driven systems. In his spare time, he can be found coding prototypes for autonomous robots, IoT devices, distributed solutions, and designing agentic architecture patterns for generative AI assisted business automation.

Joshua Toth is a Senior Prototyping Engineer with over a decade of experience in software engineering and distributed systems. He specializes in solving complex business challenges through technical prototypes, demonstrating the art of the possible. With deep expertise in proof of concept development, he focuses on bridging the gap between emerging technologies and practical business applications. In his spare time, he can be found developing next-generation interactive demonstrations and exploring cutting-edge technological innovations.

Joshua Toth is a Senior Prototyping Engineer with over a decade of experience in software engineering and distributed systems. He specializes in solving complex business challenges through technical prototypes, demonstrating the art of the possible. With deep expertise in proof of concept development, he focuses on bridging the gap between emerging technologies and practical business applications. In his spare time, he can be found developing next-generation interactive demonstrations and exploring cutting-edge technological innovations.

Sara van de Moosdijk, simply known as Moose, is an AI/ML Specialist Solution Architect at AWS. She helps AWS customers and partners build and scale AI/ML solutions through technical enablement, support, and architectural guidance. Moose spends her free time figuring out how to fit more books in her overflowing bookcase.

Sara van de Moosdijk, simply known as Moose, is an AI/ML Specialist Solution Architect at AWS. She helps AWS customers and partners build and scale AI/ML solutions through technical enablement, support, and architectural guidance. Moose spends her free time figuring out how to fit more books in her overflowing bookcase.