Amazon SageMaker HyperPod is designed to support large-scale machine learning (ML) operations, providing a robust environment for training foundation models (FMs) over extended periods. Multiple users — such as ML researchers, software engineers, data scientists, and cluster administrators — can work concurrently on the same cluster, each managing their own jobs and files without interfering with others.

When using HyperPod, you can use familiar orchestration options such as Slurm or Amazon Elastic Kubernetes Service (Amazon EKS). This post applies specifically to HyperPod clusters that use Slurm as the orchestrator. In these clusters, cluster administrators can add login nodes to facilitate user access. These login nodes serve as the entry point through which users interact with the cluster’s computational resources. By using login nodes, users can separate their interactive activities, such as browsing files, submitting jobs, and compiling code, from the cluster’s head node. This separation helps prevent any single user’s activities from affecting the overall performance of the cluster.

However, although HyperPod provides the capability to use login nodes, it doesn’t provide an integrated mechanism for load balancing user activity across these nodes. As a result, users must select a login node manually, which can lead to imbalances where some nodes are overutilized while others remain underutilized. This affects not only the efficiency of resource usage but also the performance that individual users experience.

In this post, we explore a solution for implementing load balancing across login nodes in Slurm-based HyperPod clusters. By distributing user sessions across all available login nodes, this approach provides more consistent performance, better resource utilization, and a smoother experience for all users. We guide you through the setup process, providing practical steps to achieve effective load balancing in your HyperPod clusters.

Solution overview

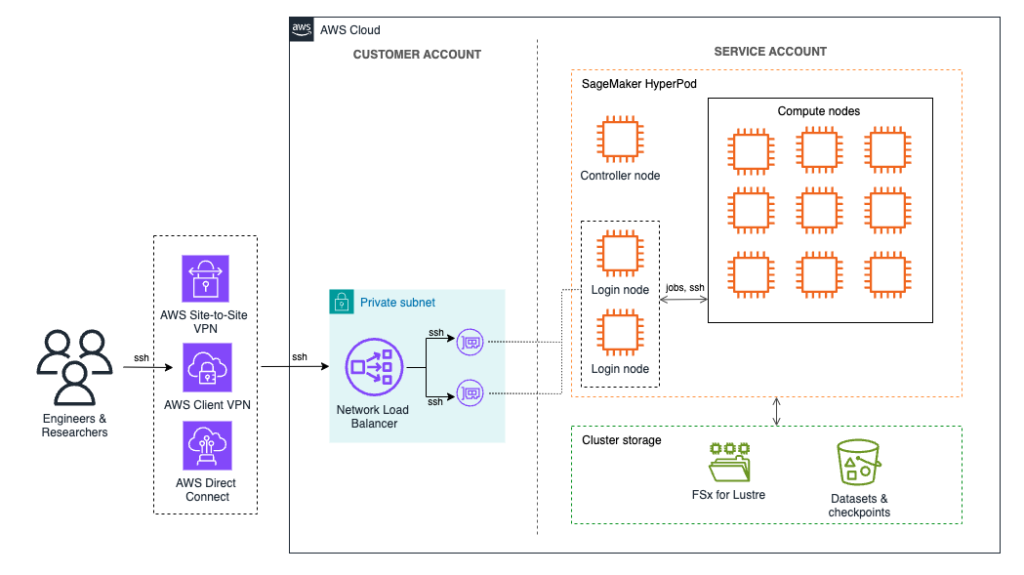

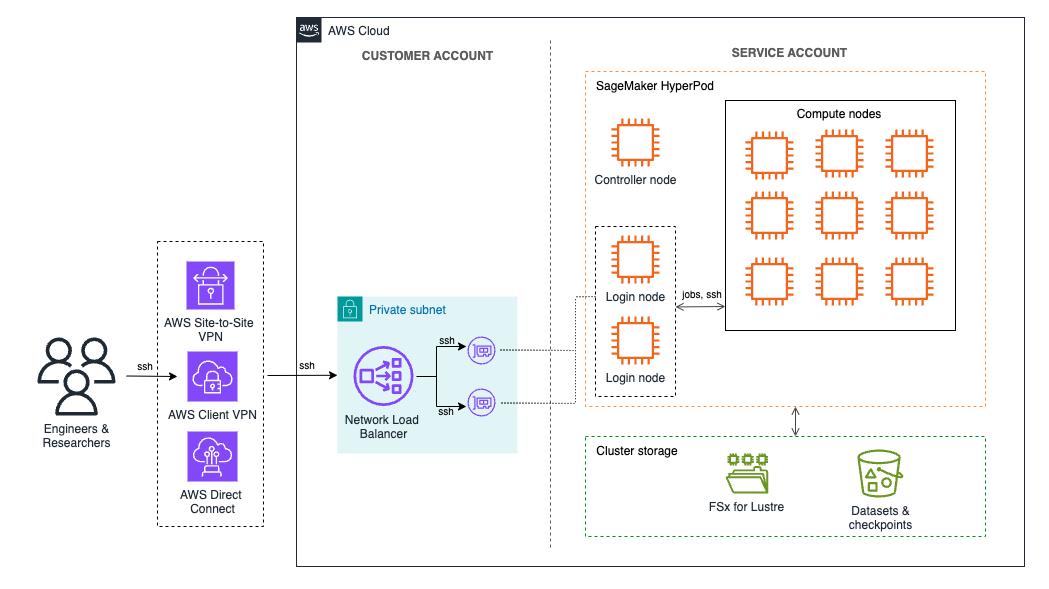

In HyperPod, login nodes serve as access points for users to interact with the cluster’s computational resources so they can manage their tasks without impacting the head node. Although the default method for accessing these login nodes is through AWS Systems Manager, there are cases where direct Secure Shell (SSH) access is more suitable. SSH provides a more traditional and flexible way of managing interactions, especially for users who require specific networking configurations or need features such as TCP load balancing, which Systems Manager doesn’t support.

Given that HyperPod is typically deployed in a virtual private cloud (VPC) using private subnets, direct SSH access to the login nodes requires secure network connectivity into the private subnet. There are several options to achieve this:

- AWS Site-to-Site VPN – Establishes a secure connection between your on-premises network and your VPC, suitable for enterprise environments

- AWS Direct Connect – Offers a dedicated network connection for high-throughput and low-latency needs

- AWS VPN Client – A software-based solution that remote users can use to securely connect to the VPC, providing flexible and easy access to the login nodes

This post demonstrates how to use the AWS VPN Client to establish a secure connection to the VPC. We set up a Network Load Balancer (NLB) within the private subnet to distribute SSH connections across the available login nodes, and we use the VPN connection to reach the NLB in the VPC. The NLB spreads user sessions across the nodes, which helps prevent any single node from becoming a bottleneck and improves overall performance and resource utilization.

For environments where VPN connectivity might not be feasible, an alternative option is to deploy the NLB in a public subnet to allow direct SSH access from the internet. In this configuration, the NLB can be secured by restricting access through a security group that allows SSH traffic only from specified, trusted IP addresses. As a result, authorized users can connect directly to the login nodes while maintaining some level of control over access to the cluster. However, this public-facing method is outside the scope of this post and isn’t recommended for production environments, as exposing SSH access to the internet can introduce additional security risks.

The following diagram provides an overview of the solution architecture.

Prerequisites

Before following the steps in this post, make sure you have the foundational components of a HyperPod cluster setup in place. This includes the core infrastructure for the HyperPod cluster and the network configuration required for secure access. Specifically, you need:

- HyperPod cluster – This post assumes you already have a HyperPod cluster deployed. If not, refer to Getting started with SageMaker HyperPod and the HyperPod workshop for guidance on creating and configuring your cluster.

- VPC, subnets, and security group – Your HyperPod cluster should reside within a VPC with associated subnets. To deploy a new VPC and subnets, follow the instructions in the Own Account section of the HyperPod workshop. This process includes deploying an AWS CloudFormation stack to create essential resources such as the VPC, subnets, security group, and an Amazon FSx for Lustre volume for shared storage.

Setting up login nodes for cluster access

Login nodes are dedicated access points that users can use to interact with the HyperPod cluster’s computational resources without impacting the head node. By connecting through login nodes, users can browse files, submit jobs, and compile code independently, promoting a more organized and efficient use of the cluster’s resources.

If you haven’t set up login nodes yet, refer to the Login Node section of the HyperPod Workshop, which provides detailed instructions on adding these nodes to your cluster configuration.

Each login node in a HyperPod cluster has an associated network interface within your VPC. A network interface, also known as an elastic network interface, represents a virtual network card that connects each login node to your VPC, allowing it to communicate over the network. These interfaces have assigned IPv4 addresses, which are essential for routing traffic from the NLB to the login nodes.

To proceed with the load balancer setup, you need to obtain the IPv4 addresses of each login node. You can obtain these addresses from the AWS Management Console or by invoking a command on your HyperPod cluster’s head node.

Using the AWS Management Console

To find the IPv4 addresses of your login nodes using the AWS Management Console, follow these steps:

- On the Amazon EC2 console, select Network interfaces in the navigation pane

- In the Search bar, select VPC ID = (Equals) and choose the ID of the VPC containing the HyperPod cluster

- In the Search bar, select Description : (Contains) and enter the name of the instance group that includes your login nodes (typically, this is login-group)

For each login node, you will find an entry in the list, as shown in the following screenshot. Note down the IPv4 addresses for all login nodes of your cluster.
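The same lookup can be scripted with the AWS CLI. The following is a minimal bash sketch, assuming AWS CLI v2 is configured for the cluster’s Region and that the login nodes’ network interface descriptions contain the instance group name, which is what the console search relies on. The function name `list_login_node_ips` and the `login-group` default are illustrative.

```shell
# Hypothetical helper: print the private IPv4 addresses of the network
# interfaces in a VPC whose description contains the login instance group
# name, mirroring the console filters above. Assumes AWS CLI v2.
list_login_node_ips() {
  local vpc_id="$1"
  local group_name="${2:-login-group}"   # assumed instance group name
  aws ec2 describe-network-interfaces \
    --filters "Name=vpc-id,Values=${vpc_id}" \
              "Name=description,Values=*${group_name}*" \
    --query 'NetworkInterfaces[].PrivateIpAddress' \
    --output text
}
```

For example, `list_login_node_ips vpc-0123456789abcdef0` (a placeholder VPC ID) prints the matching addresses on one tab-separated line.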

Using the HyperPod head node

Alternatively, you can retrieve the IPv4 addresses by running a command on your HyperPod cluster’s head node.
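One way to do this is to read the cluster’s resource configuration on the head node. The bash sketch below assumes that the file is available at `/opt/ml/config/resource_config.json` (the path the HyperPod lifecycle scripts read) and that each entry under `InstanceGroups[].Instances[]` has a `CustomerIpAddress` field; check both on your own cluster before relying on them. The function name is hypothetical, and `python3` serves only as a JSON parser (`jq` would work equally well).

```shell
# Hypothetical helper: read a HyperPod resource_config.json document on stdin
# and print the IPv4 address of each instance in the given instance group.
# Assumed JSON layout: InstanceGroups[].Name and
# InstanceGroups[].Instances[].CustomerIpAddress.
# (The embedded Python starts at column 0 because Python is indentation-sensitive.)
login_ips_from_config() {
  local group_name="${1:-login-group}"   # assumed login instance group name
  python3 -c '
import json
import sys

config = json.load(sys.stdin)
for group in config.get("InstanceGroups", []):
    if group.get("Name") == sys.argv[1]:
        for instance in group.get("Instances", []):
            print(instance["CustomerIpAddress"])
' "$group_name"
}
```

Run it as `sudo cat /opt/ml/config/resource_config.json | login_ips_from_config login-group`; reading through `sudo cat` covers the case where the file is readable only by root.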

Create a Network Load Balancer

The next step is to create an NLB to manage traffic across your cluster’s login nodes.

For the NLB deployment, you need the IPv4 addresses of the login nodes collected earlier and the appropriate security group configurations. If you deployed your cluster using the HyperPod workshop instructions, a security group that permits communication between all cluster nodes should already be in place.

This security group can be applied to the load balancer, as demonstrated in the following instructions. Alternatively, you can opt to create a dedicated security group that grants access specifically to the login nodes.
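If you choose a dedicated security group, a minimal bash sketch with the AWS CLI might look like the following. It assumes that clients reach the NLB from inside the VPC CIDR (10.0.0.0/16 if you followed the HyperPod workshop) and that the login nodes’ existing security group should accept SSH from the new group. The function name, group name, and description are illustrative.

```shell
# Hypothetical helper: create a dedicated security group for the NLB, allow
# SSH into it from the VPC CIDR, and allow SSH from it into the login nodes'
# security group. Prints the new security group ID.
create_login_nlb_sg() {
  local vpc_id="$1" vpc_cidr="$2" login_node_sg="$3"
  local nlb_sg
  nlb_sg=$(aws ec2 create-security-group \
    --group-name smhp-login-nlb-sg \
    --description "SSH to HyperPod login nodes through the NLB" \
    --vpc-id "$vpc_id" \
    --query GroupId --output text) || return 1

  # Inbound SSH to the NLB from inside the VPC (for example, Client VPN traffic).
  aws ec2 authorize-security-group-ingress \
    --group-id "$nlb_sg" --protocol tcp --port 22 \
    --cidr "$vpc_cidr" >/dev/null || return 1

  # Inbound SSH to the login nodes from the NLB; the NLB's TCP health checks
  # use the same port, so this rule covers them too.
  aws ec2 authorize-security-group-ingress \
    --group-id "$login_node_sg" --protocol tcp --port 22 \
    --source-group "$nlb_sg" >/dev/null || return 1

  echo "$nlb_sg"
}
```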

Create target group

First, we create the target group that will be used by the NLB.

- On the Amazon EC2 console, select Target groups in the navigation pane

- Choose Create target group

- Create a target group with the following parameters:

- For Choose a target type, choose IP addresses

- For Target group name, enter smhp-login-node-tg

- For Protocol : Port, choose TCP and enter 22

- For IP address type, choose IPv4

- For VPC, choose SageMaker HyperPod VPC (which was created with the CloudFormation template)

- For Health check protocol, choose TCP

- Choose Next, as shown in the following screenshot

- In the Register targets section, register the login node IP addresses as the targets

- For Ports, enter 22 and choose Include as pending below, as shown in the following screenshot

- The login node IPs will appear as targets with Pending health status. Choose Create target group, as shown in the following screenshot
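For repeatable setups, the console steps above map to two AWS CLI calls. This bash sketch assumes AWS CLI v2 and reuses the names from the steps; the function name `create_login_target_group` is hypothetical.

```shell
# Hypothetical helper: create an IP-type TCP:22 target group with TCP health
# checks, then register each login node IP on port 22. Prints the target
# group ARN.
create_login_target_group() {
  local vpc_id="$1"; shift              # remaining arguments: login node IPs
  if (( $# == 0 )); then
    echo "usage: create_login_target_group <vpc-id> <ip> [<ip>...]" >&2
    return 1
  fi
  local tg_arn
  tg_arn=$(aws elbv2 create-target-group \
    --name smhp-login-node-tg \
    --protocol TCP --port 22 \
    --target-type ip --ip-address-type ipv4 \
    --vpc-id "$vpc_id" \
    --health-check-protocol TCP \
    --query 'TargetGroups[0].TargetGroupArn' --output text) || return 1

  # Register every login node IP on port 22 (the console's "Include as pending below").
  local targets=() ip
  for ip in "$@"; do
    targets+=("Id=${ip},Port=22")
  done
  aws elbv2 register-targets --target-group-arn "$tg_arn" \
    --targets "${targets[@]}" || return 1

  echo "$tg_arn"
}
```

For example, `tg_arn=$(create_login_target_group vpc-0123456789abcdef0 10.0.1.10 10.0.1.11)`, with placeholder IDs and IPs.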

Create load balancer

To create the load balancer, follow these steps:

- On the Amazon EC2 console, select Load Balancers in the navigation pane

- Choose Create load balancer

- Choose Network Load Balancer and choose Create, as shown in the following screenshot

- Provide a name (for example, smhp-login-node-lb) and, for Scheme, choose Internal

- For network mapping, select the VPC that contains your HyperPod cluster and an associated private subnet, as shown in the following screenshot

- Select a security group that allows access on port 22 to the login nodes. If you deployed your cluster using the HyperPod workshop instructions, you can use the security group from this deployment.

- Choose TCP as the protocol and 22 as the port, and select the target group that you created earlier, as shown in the following screenshot

- Choose Create load balancer

After the load balancer has been created, you can find its DNS name on the load balancer’s detail page, as shown in the following screenshot.
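The load balancer steps can be sketched the same way: create an internal NLB, add a TCP:22 listener that forwards to the target group, and read back the DNS name. This bash sketch assumes AWS CLI v2 and a single private subnet, as in the steps above; the function name is hypothetical.

```shell
# Hypothetical helper: create an internal NLB in one private subnet, add a
# TCP:22 listener forwarding to the target group, and print the DNS name.
create_login_nlb() {
  local subnet_id="$1" sg_id="$2" tg_arn="$3"
  local lb_arn
  lb_arn=$(aws elbv2 create-load-balancer \
    --name smhp-login-node-lb \
    --type network --scheme internal \
    --subnets "$subnet_id" \
    --security-groups "$sg_id" \
    --query 'LoadBalancers[0].LoadBalancerArn' --output text) || return 1

  aws elbv2 create-listener \
    --load-balancer-arn "$lb_arn" \
    --protocol TCP --port 22 \
    --default-actions "Type=forward,TargetGroupArn=${tg_arn}" >/dev/null || return 1

  aws elbv2 describe-load-balancers \
    --load-balancer-arns "$lb_arn" \
    --query 'LoadBalancers[0].DNSName' --output text
}
```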

Making sure host keys are consistent across login nodes

When using multiple login nodes in a load-balanced environment, it’s crucial to maintain consistent SSH host keys across all nodes. SSH host keys are unique identifiers that each server uses to prove its identity to connecting clients, and clients record the key they first receive for the load balancer’s address in their known_hosts file. If each login node has a different host key, users routed to a different node see OpenSSH’s “WARNING: REMOTE HOST IDENTIFICATION HAS CHANGED!” message, and by default the connection fails. This causes confusion and can lead users to question the security of the connection.

To avoid these warnings, configure the same SSH host keys on all login nodes in the load balancing rotation. This setup makes sure that users won’t receive host key mismatch alerts when routed to a different node by the load balancer.

You can run a script on the cluster’s head node to copy the SSH host keys from the first login node to the other login nodes in your HyperPod cluster.
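A minimal sketch of such a script follows. It assumes that, from the head node, you can SSH to every login node without a password and run `sudo` there, that the host keys live in `/etc/ssh/ssh_host_*`, and that the SSH service is named `ssh` (as on Ubuntu; some distributions call it `sshd`). The function name is illustrative; you pass the first login node followed by the others.

```shell
# Hypothetical helper: copy /etc/ssh/ssh_host_* from a source login node to
# each destination login node, then restart the SSH service there.
# The body runs in a subshell ( ... ) so that pipefail does not leak into
# the caller's shell.
sync_ssh_host_keys() (
  set -o pipefail   # fail if either side of the ssh | ssh pipeline fails
  src="$1"; shift
  for dst in "$@"; do
    echo "Copying SSH host keys from ${src} to ${dst}"
    # Stream the key files as a tar archive straight from source to
    # destination; extracting as root keeps ownership and permissions.
    ssh "$src" 'cd /etc/ssh && sudo tar -cf - ssh_host_*' \
      | ssh "$dst" 'sudo tar -C /etc/ssh -xpf - && sudo systemctl restart ssh' \
      || exit 1
  done
)
```

For example, `sync_ssh_host_keys 10.0.1.10 10.0.1.11 10.0.1.12` (placeholder IPs). Streaming through a pipe means the private host keys are never written to the head node’s disk. Because the destination nodes now present different host keys than before, the head node’s own known_hosts entries for them may need refreshing afterward, for example with `ssh-keygen -R <ip>`.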

Create AWS Client VPN endpoint

Because the NLB was created with the internal scheme, it’s only accessible from within the HyperPod VPC. To access the VPC and send requests to the NLB, we use AWS Client VPN in this post.

AWS Client VPN is a managed client-based VPN service that enables secure access to your AWS resources and resources in your on-premises network.

We’ll set up an AWS Client VPN endpoint that provides clients with access to the HyperPod VPC and uses mutual authentication. With mutual authentication, Client VPN uses certificates to perform authentication between clients and the Client VPN endpoint.
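Mutual authentication requires the server certificate and the client certificate chain to be available in AWS Certificate Manager (ACM). Assuming you have already generated a CA and server and client certificates with their keys (the Client VPN documentation walks through this with easy-rsa), a minimal bash import sketch could look like the following; the function name and file names are illustrative.

```shell
# Hypothetical helper: import a certificate, its private key, and the issuing
# CA chain into ACM, printing the resulting certificate ARN.
import_vpn_cert() {
  local cert="$1" key="$2" ca_chain="$3"
  aws acm import-certificate \
    --certificate "fileb://${cert}" \
    --private-key "fileb://${key}" \
    --certificate-chain "fileb://${ca_chain}" \
    --query CertificateArn --output text
}
```

For example, `server_cert_arn=$(import_vpn_cert server.crt server.key ca.crt)`, and likewise for the client certificate.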

To deploy a client VPN endpoint with mutual authentication, you can follow the steps outlined in Get started with AWS Client VPN. When configuring the client VPN to access the HyperPod VPC and the login nodes, keep these adaptations to the following steps in mind:

- Step 2 (create a Client VPN endpoint) – By default, all client traffic is routed through the Client VPN tunnel. To allow internet access without routing traffic through the VPN, you can enable the option Enable split-tunnel when creating the endpoint. When this option is enabled, only traffic destined for networks matching a route in the Client VPN endpoint route table is routed through the VPN tunnel. For more details, refer to Split-tunnel on Client VPN endpoints.

- Step 3 (target network associations) – Select the VPC and private subnet used by your HyperPod cluster, which contains the cluster login nodes.

- Step 4 (authorization rules) – Choose the Classless Inter-Domain Routing (CIDR) range associated with the HyperPod VPC. If you followed the HyperPod workshop instructions, the CIDR range is 10.0.0.0/16.

- Step 6 (security groups) – Select the security group that you previously used when creating the NLB.
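If you prefer the AWS CLI to the console, the adapted steps can be combined into one bash sketch. It assumes AWS CLI v2, certificates already imported into ACM, and a client CIDR block that doesn’t overlap the VPC CIDR (for example, a hypothetical 172.16.0.0/22). Associating the subnet adds a route for the VPC CIDR to the endpoint’s route table, which is the route that split-tunnel traffic matches. The function name and argument order are illustrative.

```shell
# Hypothetical helper: create a split-tunnel Client VPN endpoint with mutual
# (certificate) authentication and the NLB security group (Step 2 and
# Step 6), associate the HyperPod private subnet (Step 3), and authorize the
# VPC CIDR (Step 4). Prints the endpoint ID.
create_hyperpod_client_vpn() {
  local client_cidr="$1" server_cert_arn="$2" client_ca_arn="$3"
  local vpc_id="$4" subnet_id="$5" vpc_cidr="$6" sg_id="$7"
  local endpoint_id
  endpoint_id=$(aws ec2 create-client-vpn-endpoint \
    --client-cidr-block "$client_cidr" \
    --server-certificate-arn "$server_cert_arn" \
    --authentication-options "Type=certificate-authentication,MutualAuthentication={ClientRootCertificateChainArn=${client_ca_arn}}" \
    --connection-log-options Enabled=false \
    --split-tunnel \
    --vpc-id "$vpc_id" \
    --security-group-ids "$sg_id" \
    --query ClientVpnEndpointId --output text) || return 1

  # Step 3: associate the private subnet that contains the login nodes.
  aws ec2 associate-client-vpn-target-network \
    --client-vpn-endpoint-id "$endpoint_id" \
    --subnet-id "$subnet_id" >/dev/null || return 1

  # Step 4: allow all clients to reach the HyperPod VPC CIDR.
  aws ec2 authorize-client-vpn-ingress \
    --client-vpn-endpoint-id "$endpoint_id" \
    --target-network-cidr "$vpc_cidr" --authorize-all-groups >/dev/null || return 1

  echo "$endpoint_id"
}
```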

Connecting to the login nodes

After the AWS Client VPN is configured, clients can establish a VPN connection to the HyperPod VPC. With the VPN connection in place, clients can use SSH to connect to the NLB, which will route them to one of the login nodes.

```shell
ssh -i /path/to/your/private-key.pem user@<NLB-IP-or-DNS>
```
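To check that sessions are actually being spread, you can open several connections through the NLB and count which login node answered each one. This bash sketch is illustrative (the function name and default connection count are assumptions). The NLB uses flow hashing to distribute TCP connections, so sessions are spread across nodes, but a handful of connections isn’t guaranteed to reach every node.

```shell
# Hypothetical check: open several SSH sessions through the NLB and count how
# many were answered by each login node.
check_login_node_spread() {
  local key="$1" target="$2" count="${3:-6}"   # target: user@<NLB-IP-or-DNS>
  local i
  for ((i = 1; i <= count; i++)); do
    # -n: don't read stdin; BatchMode: never prompt; accept-new: record the
    # host key the first time, but refuse to connect if it later changes,
    # which would reveal inconsistent host keys across login nodes.
    ssh -n -i "$key" -o BatchMode=yes -o StrictHostKeyChecking=accept-new \
      "$target" hostname
  done | sort | uniq -c
}
```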

To allow SSH access to the login nodes, you must create user accounts on the cluster and add their public keys to the authorized_keys file on each login node (or on all nodes, if necessary). For detailed instructions on managing multi-user access, refer to the Multi-User section of the HyperPod workshop.
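Where you manage keys by hand, a small helper can append a user’s public key with the permissions that sshd expects and skip duplicate entries. This bash sketch is illustrative (the function name and arguments are assumptions); adapt it to however your cluster stores home directories.

```shell
# Hypothetical helper: idempotently add a public key to
# <home_dir>/.ssh/authorized_keys with 700/600 permissions. The optional
# third argument (user or user:group) is for running it as root on behalf
# of another user.
add_authorized_key() {
  local home_dir="$1" pubkey="$2" owner="${3:-}"
  local ssh_dir="${home_dir}/.ssh"
  local auth_file="${ssh_dir}/authorized_keys"
  mkdir -p "$ssh_dir" && chmod 700 "$ssh_dir" || return 1
  touch "$auth_file" && chmod 600 "$auth_file" || return 1
  # Append the key only if that exact line is not already present.
  if ! grep -qxF -- "$pubkey" "$auth_file"; then
    printf '%s\n' "$pubkey" >> "$auth_file" || return 1
  fi
  # Hand the directory and file over to the account that logs in with them.
  if [[ -n "$owner" ]]; then
    chown -R "$owner" "$ssh_dir" || return 1
  fi
}
```

For example, on a login node: `sudo bash -c "$(declare -f add_authorized_key); add_authorized_key /home/alice 'ssh-ed25519 AAAA... alice@laptop' alice"`, where the user name and key are placeholders.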

In addition to using the AWS Client VPN, you can also access the NLB from other AWS services, such as Amazon Elastic Compute Cloud (Amazon EC2) instances, if they meet the following requirements:

- VPC connectivity – The EC2 instances must be either in the same VPC as the NLB or able to access the HyperPod VPC through a peering connection or similar network setup.

- Security group configuration – The EC2 instance’s security group must allow outbound connections on port 22 to the NLB security group. Likewise, the NLB security group should be configured to accept inbound SSH traffic on port 22 from the EC2 instance’s security group.
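Both rules can be added with the AWS CLI. In this minimal bash sketch, the function and argument names are hypothetical. The egress rule only matters if the instance’s security group no longer has the default allow-all outbound rule; adding it alongside that rule is harmless.

```shell
# Hypothetical helper: allow EC2 instances in one security group to reach the
# NLB on port 22 (ingress on the NLB group, egress on the instance group).
allow_ec2_ssh_to_nlb() {
  local nlb_sg="$1" ec2_sg="$2"
  aws ec2 authorize-security-group-ingress \
    --group-id "$nlb_sg" --protocol tcp --port 22 \
    --source-group "$ec2_sg" >/dev/null || return 1
  aws ec2 authorize-security-group-egress \
    --group-id "$ec2_sg" \
    --ip-permissions "IpProtocol=tcp,FromPort=22,ToPort=22,UserIdGroupPairs=[{GroupId=${nlb_sg}}]" >/dev/null
}
```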

Clean up

To remove deployed resources, you can clean them up in the following order:

- Delete the Client VPN endpoint

- Delete the Network Load Balancer

- Delete the target group associated with the load balancer
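The same order can be scripted with the AWS CLI. This bash sketch assumes you have the endpoint ID and the load balancer and target group ARNs at hand; the function name and retry limits are illustrative. It disassociates the endpoint’s target networks first, because a Client VPN endpoint can’t be deleted while subnets are still associated, and it waits for the load balancer to be gone before deleting the target group.

```shell
# Hypothetical helper: delete the Client VPN endpoint, the NLB, and the
# target group, in that order.
cleanup_login_lb() {
  local endpoint_id="$1" lb_arn="$2" tg_arn="$3"
  local assoc tries=0

  # 1. Client VPN endpoint: disassociate target networks, then delete.
  for assoc in $(aws ec2 describe-client-vpn-target-networks \
      --client-vpn-endpoint-id "$endpoint_id" \
      --query 'ClientVpnTargetNetworks[].AssociationId' --output text); do
    aws ec2 disassociate-client-vpn-target-network \
      --client-vpn-endpoint-id "$endpoint_id" \
      --association-id "$assoc" >/dev/null || return 1
  done
  # Disassociation is asynchronous, so the delete may need a few retries.
  until aws ec2 delete-client-vpn-endpoint \
      --client-vpn-endpoint-id "$endpoint_id" >/dev/null; do
    (( tries++ < 10 )) || return 1
    sleep 30
  done

  # 2. Network Load Balancer (its listeners are deleted with it).
  aws elbv2 delete-load-balancer --load-balancer-arn "$lb_arn" || return 1
  aws elbv2 wait load-balancers-deleted --load-balancer-arns "$lb_arn" || return 1

  # 3. Target group, which can be removed once no listener references it.
  aws elbv2 delete-target-group --target-group-arn "$tg_arn"
}
```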

If you also want to delete the HyperPod cluster, follow these additional steps:

- Delete the HyperPod cluster

- Delete the CloudFormation stack, which includes the VPC, subnets, security group, and FSx for Lustre volume
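A bash sketch of these two steps, assuming AWS CLI v2 and illustrative function and argument names. It waits for the cluster to disappear before deleting the stack, because cluster resources still attached to the VPC’s subnets could otherwise make the stack deletion fail.

```shell
# Hypothetical helper: delete the HyperPod cluster, wait until it no longer
# exists, then delete the CloudFormation stack and wait for completion.
delete_cluster_and_stack() {
  local cluster_name="$1" stack_name="$2"
  aws sagemaker delete-cluster --cluster-name "$cluster_name" >/dev/null || return 1
  # Poll until describe-cluster fails, which happens once the cluster is gone.
  # (It also fails on other errors, such as expired credentials.)
  while aws sagemaker describe-cluster --cluster-name "$cluster_name" >/dev/null 2>&1; do
    sleep 60
  done
  aws cloudformation delete-stack --stack-name "$stack_name" || return 1
  aws cloudformation wait stack-delete-complete --stack-name "$stack_name"
}
```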

Conclusion

In this post, we explored how to implement login node load balancing for SageMaker HyperPod clusters. By using a Network Load Balancer to distribute user traffic across login nodes, you can optimize resource utilization and enhance the overall multi-user experience, providing seamless access to cluster resources for each user.

This approach represents only one way to customize your HyperPod cluster. Because SageMaker HyperPod is flexible, you can adapt configurations to your unique needs while benefiting from a managed, resilient environment. Whether you need to scale foundation model workloads, share compute resources across different tasks, or support long-running training jobs, SageMaker HyperPod offers a versatile solution that can evolve with your requirements.

For more details on making the most of SageMaker HyperPod, dive into the HyperPod workshop and explore further blog posts covering HyperPod.

About the Authors