This post takes you through the most common challenges that customers face when searching internal documents, and gives you concrete guidance on how AWS services can be used to create a generative AI conversational bot that makes internal information more useful.

Unstructured data accounts for 80% of all the data found within organizations, consisting of repositories of manuals, PDFs, FAQs, emails, and other documents that grow daily. Businesses today rely on continuously growing repositories of internal information, and problems arise when the amount of unstructured data becomes unmanageable. Often, users find themselves reading and checking many different internal sources to find the answers they need.

Internal question and answer forums can help users get highly specific answers but also require longer wait times. In the case of company-specific internal FAQs, long wait times result in lower employee productivity. Question and answer forums are difficult to scale because they rely on manually written answers. With generative AI, there is currently a paradigm shift in how users search and find information. The next logical step is to use generative AI to condense large documents into smaller, bite-sized information for easier user consumption. Instead of spending a long time reading text or waiting for answers, users can generate summaries in real time based on multiple existing repositories of internal information.

Solution overview

The solution allows customers to retrieve curated responses to questions asked about internal documents by using a transformer model to generate answers to questions about data that it has not been trained on, a technique known as zero-shot prompting. By adopting this solution, customers can gain the following benefits:

- Find accurate answers to questions based on existing sources of internal documents

- Reduce the time users spend searching for answers by using Large Language Models (LLMs) to provide near-immediate answers to complex queries using documents with the most updated information

- Search previously answered questions through a centralized dashboard

- Reduce stress caused by spending time manually reading information to look for answers

Retrieval Augmented Generation (RAG)

Retrieval Augmented Generation (RAG) reduces some of the shortcomings of LLM-based queries by finding the answers from your knowledge base and using the LLM to summarize the documents into concise responses. Please read this post to learn how to implement the RAG approach with Amazon Kendra. The following risks and limitations are associated with LLM-based queries that a RAG approach with Amazon Kendra addresses:

- Hallucinations and traceability – LLMs are trained on large datasets and generate responses based on probabilities. This can lead to inaccurate answers, which are known as hallucinations.

- Multiple data silos – In order to reference data from multiple sources within your response, one needs to set up a connector ecosystem to aggregate the data. Accessing multiple repositories is manual and time-consuming.

- Security – Security and privacy are critical considerations when deploying conversational bots powered by RAG and LLMs. Despite using Amazon Comprehend to filter out personal data that may be provided through user queries, there remains a possibility of unintentionally surfacing personal or sensitive information, depending on the ingested data. This means that controlling access to the chatbot is crucial to prevent unintended access to sensitive information.

- Data relevance – LLMs are trained on data up to a certain date, which means information is often not current. The cost associated with training models on recent data is high. To ensure accurate and up-to-date responses, organizations bear the responsibility of regularly updating and enriching the content of the indexed documents.

- Cost – The cost associated with deploying this solution should be a consideration for businesses. Businesses need to carefully assess their budget and performance requirements when implementing this solution. Running LLMs can require substantial computational resources, which may increase operational costs. These costs can become a limitation for applications that need to operate at a large scale. However, one of the benefits of the AWS Cloud is the flexibility to only pay for what you use. AWS offers a simple, consistent, pay-as-you-go pricing model, so you are charged only for the resources you consume.
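To make the traceability point above more concrete, the following minimal sketch collects retrieved passages together with their source titles and URIs, so that an answer can point back to the documents it came from. It assumes a response shaped like the output of the Amazon Kendra `Retrieve` API (`ResultItems` entries with `Content`, `DocumentTitle`, and `DocumentURI`). The function name, the `max_passages` parameter, and the sample data are hypothetical and are not taken from the solution's code.

```python
from typing import Any, Dict, List


def extract_passages(kendra_response: Dict[str, Any], max_passages: int = 3) -> List[Dict[str, str]]:
    """Pull passage text plus source metadata out of a Retrieve-style response."""
    passages = []
    for item in kendra_response.get("ResultItems", [])[:max_passages]:
        passages.append(
            {
                "text": item.get("Content", ""),
                "title": item.get("DocumentTitle", ""),
                "uri": item.get("DocumentURI", ""),
            }
        )
    return passages


# Hypothetical response; in a deployment it would come from
# boto3.client("kendra").retrieve(IndexId=index_id, QueryText=query).
sample_response = {
    "ResultItems": [
        {
            "Content": "Employees may book economy fares for trips under six hours.",
            "DocumentTitle": "Travel Policy",
            "DocumentURI": "https://example.com/policies/travel.pdf",
        },
        {
            "Content": "Expense reports are due within 30 days of travel.",
            "DocumentTitle": "Expense Guidelines",
            "DocumentURI": "https://example.com/policies/expenses.pdf",
        },
    ]
}

passages = extract_passages(sample_response)
for passage in passages:
    print(f"{passage['title']} ({passage['uri']}): {passage['text']}")
```

Keeping the title and URI next to each passage is one simple way to let the bot cite its sources alongside the generated answer.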

Usage of Amazon SageMaker JumpStart

For transformer-based language models, organizations can benefit from using Amazon SageMaker JumpStart, which offers a collection of pre-built machine learning models. Amazon SageMaker JumpStart offers a wide range of text generation and question-answering (Q&A) foundation models that can be easily deployed and used. This solution integrates a FLAN T5-XL Amazon SageMaker JumpStart model, but there are different aspects to keep in mind when choosing a foundation model.
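As an illustration of what calling such a deployed model can look like, the sketch below sends a prompt to a real-time endpoint through the SageMaker Runtime `invoke_endpoint` call. The request and response schema (`text_inputs` in, `generated_texts` out) is an assumption based on the JumpStart FLAN-T5 text-to-text models and may differ for other foundation models. The endpoint name and the `FakeRuntimeClient` class are hypothetical; the fake client only exists so that the example runs without AWS credentials.

```python
import io
import json
from typing import Any


def generate_answer(runtime_client: Any, endpoint_name: str, prompt: str, max_length: int = 200) -> str:
    """Send a prompt to a SageMaker real-time endpoint and return the generated text."""
    # Assumed request schema for a JumpStart FLAN-T5 text2text endpoint.
    payload = {"text_inputs": prompt, "max_length": max_length}
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps(payload).encode("utf-8"),
    )
    # The body is a stream; the assumed response schema is {"generated_texts": [...]}.
    result = json.loads(response["Body"].read().decode("utf-8"))
    return result["generated_texts"][0]


class FakeRuntimeClient:
    """Offline stand-in for boto3.client("sagemaker-runtime")."""

    def invoke_endpoint(self, EndpointName: str, ContentType: str, Body: bytes) -> dict:
        prompt = json.loads(Body)["text_inputs"]
        body = json.dumps({"generated_texts": [f"echo: {prompt}"]}).encode("utf-8")
        return {"Body": io.BytesIO(body)}


print(generate_answer(FakeRuntimeClient(), "hypothetical-flan-t5-endpoint", "What is RAG?"))
```

In a deployment, `runtime_client` would be `boto3.client("sagemaker-runtime")`. Passing the client in as a parameter keeps the function easy to test and makes it simpler to swap in a different model later.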

Integrating security in our workflow

Following the best practices of the Security Pillar of the Well-Architected Framework, Amazon Cognito is used for authentication. Amazon Cognito User Pools can be integrated with third-party identity providers that support several frameworks used for access control, including Open Authorization (OAuth), OpenID Connect (OIDC), or Security Assertion Markup Language (SAML). Identifying users and their actions allows the solution to maintain traceability. The solution also uses the Amazon Comprehend personally identifiable information (PII) detection feature to automatically identify and redact PII. Redacted PII includes addresses, social security numbers, email addresses, and other sensitive information. This design ensures that any PII provided by the user through the input query is redacted. The PII is not stored, used by Amazon Kendra, or fed to the LLM.
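The redaction step described above can be sketched as follows. The example assumes an entity list in the shape returned by the Amazon Comprehend `detect_pii_entities` call (each entity has `Type`, `Score`, `BeginOffset`, and `EndOffset`). The function name, the `min_score` threshold, and the sample query are hypothetical, and replacing each span with its entity type is only one possible redaction style.

```python
from typing import Any, Dict, List


def redact_pii(text: str, entities: List[Dict[str, Any]], min_score: float = 0.5) -> str:
    """Replace each detected PII span with its entity type, for example [EMAIL]."""
    redacted = text
    # Work from the end of the string so that earlier offsets stay valid.
    for entity in sorted(entities, key=lambda e: e["BeginOffset"], reverse=True):
        if entity["Score"] < min_score:
            continue  # skip low-confidence detections
        redacted = (
            redacted[: entity["BeginOffset"]]
            + f"[{entity['Type']}]"
            + redacted[entity["EndOffset"]:]
        )
    return redacted


query = "My email is jane@example.com, how do I reset my badge?"
email = "jane@example.com"
start = query.index(email)
# Hypothetical entity list, shaped like the "Entities" field of the API response.
entities = [{"Type": "EMAIL", "Score": 0.99, "BeginOffset": start, "EndOffset": start + len(email)}]

print(redact_pii(query, entities))
```

In the Lambda function, the entity list would instead come from `boto3.client("comprehend").detect_pii_entities(Text=query, LanguageCode="en")["Entities"]`, and only the redacted query would be passed on to Amazon Kendra and the LLM.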

Solution Walkthrough

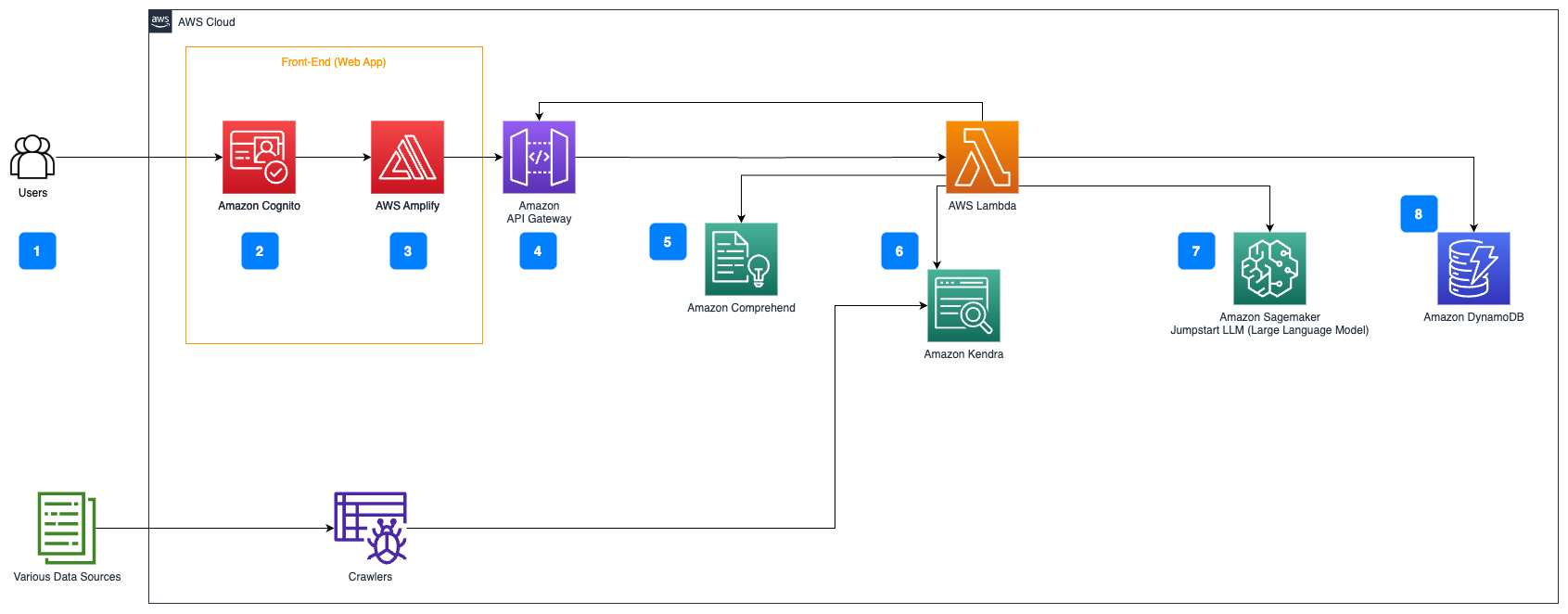

The following steps describe the question answering over documents workflow:

- Users send a query through a web interface.

- Amazon Cognito is used for authentication, ensuring secure access to the web application.

- The web application front-end is hosted on AWS Amplify.

- Amazon API Gateway hosts a REST API with various endpoints to handle user requests that are authenticated using Amazon Cognito.

- PII redaction with Amazon Comprehend:

    - User query processing: When a user submits a query or input, it is first passed through Amazon Comprehend. The service analyzes the text and identifies any PII entities present within the query.

    - PII extraction: Amazon Comprehend extracts the detected PII entities from the user query.

- Relevant information retrieval with Amazon Kendra:

    - Amazon Kendra is used to manage an index of documents that contains the information used to generate answers to the user’s queries.

    - The LangChain QA retrieval module is used to build a conversation chain that has relevant information about the user’s queries.

- Integration with Amazon SageMaker JumpStart:

    - The AWS Lambda function uses the LangChain library and connects to the Amazon SageMaker JumpStart endpoint with a context-stuffed query. The Amazon SageMaker JumpStart endpoint serves as the interface of the LLM used for inference.

- Storing responses and returning them to the user:

    - The response from the LLM is stored in Amazon DynamoDB along with the user’s query, the timestamp, a unique identifier, and other arbitrary identifiers for the item such as question category. Storing the question and answer as discrete items allows the AWS Lambda function to easily recreate a user’s conversation history based on the time when questions were asked.

    - Finally, the response is sent back to the user over HTTPS through the Amazon API Gateway REST API integration response.
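The storage step in the workflow above can be sketched in plain Python. The example builds one item per question and answer with a timestamp, a unique identifier, and a category, and then recreates a user's conversation history by sorting on the timestamp. The attribute names and the key design (user ID plus timestamp) are assumptions for illustration; the post does not specify the actual table schema.

```python
import uuid
from datetime import datetime, timezone
from typing import Dict, List


def build_item(user_id: str, question: str, answer: str, category: str) -> Dict[str, str]:
    """Create one question-and-answer record as a plain dictionary."""
    return {
        "user_id": user_id,
        "item_id": str(uuid.uuid4()),
        # Fixed-width UTC ISO 8601 timestamps sort correctly as strings.
        "timestamp": datetime.now(timezone.utc).isoformat(timespec="microseconds"),
        "question": question,
        "answer": answer,
        "category": category,
    }


def conversation_history(items: List[Dict[str, str]], user_id: str) -> List[Dict[str, str]]:
    """Return one user's items ordered by the time each question was asked."""
    own_items = [item for item in items if item["user_id"] == user_id]
    return sorted(own_items, key=lambda item: item["timestamp"])


first = build_item("user-1", "How many vacation days do I have?", "20 days per year.", "hr")
second = build_item("user-1", "Can I carry them over?", "Up to 5 days.", "hr")
other = build_item("user-2", "Where is the VPN guide?", "On the IT wiki.", "it")

for item in conversation_history([second, other, first], "user-1"):
    print(item["timestamp"], item["question"], "->", item["answer"])
```

With boto3, each dictionary could be written with the DynamoDB `Table.put_item(Item=item)` call. If the table used `user_id` as the partition key and `timestamp` as the sort key (an assumption), the same history could be read back in order with a single query.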

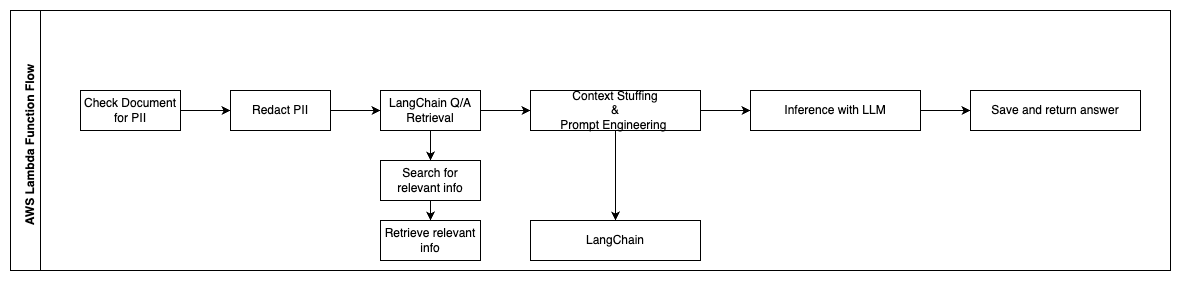

The following steps describe the AWS Lambda functions and their flow through the process:

- Check and redact any PII / Sensitive info

- LangChain QA Retrieval Chain

- Search and retrieve relevant info

- Context Stuffing & Prompt Engineering

- Inference with LLM

- Return response & Save it
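The steps above can be sketched as a small pipeline. This is a plain-Python stand-in, not the LangChain retrieval chain that the solution uses: each stage is passed in as a function so the order of operations is easy to follow. The prompt template, the character budget in `stuff_context`, and all function names are hypothetical.

```python
from typing import Callable, Dict, List

PROMPT_TEMPLATE = (
    "Answer the question using only the context below. "
    "If the context does not contain the answer, say you do not know.\n\n"
    "Context:\n{context}\n\nQuestion: {question}\nAnswer:"
)


def stuff_context(question: str, passages: List[str], max_chars: int = 2000) -> str:
    """Pack retrieved passages into one prompt, stopping at a character budget."""
    selected: List[str] = []
    used = 0
    for passage in passages:
        if used + len(passage) > max_chars:
            break
        selected.append(passage)
        used += len(passage)
    return PROMPT_TEMPLATE.format(context="\n---\n".join(selected), question=question)


def answer_question(
    query: str,
    redact: Callable[[str], str],
    retrieve: Callable[[str], List[str]],
    infer: Callable[[str], str],
    save: Callable[[Dict[str, str]], None],
) -> str:
    """Run the stages in order: redact, retrieve, stuff context, infer, save."""
    clean_query = redact(query)
    passages = retrieve(clean_query)
    prompt = stuff_context(clean_query, passages)
    answer = infer(prompt)
    save({"question": clean_query, "answer": answer})
    return answer


# Offline stand-ins for Amazon Comprehend, Amazon Kendra, the LLM endpoint, and DynamoDB.
saved_items: List[Dict[str, str]] = []
result = answer_question(
    "What is the PTO policy?",
    redact=lambda q: q,
    retrieve=lambda q: ["Full-time employees receive 20 days of PTO per year."],
    infer=lambda p: "20 days per year" if "20 days of PTO" in p else "I do not know",
    save=saved_items.append,
)
print(result)
```

Because only the redacted query is handed to the later stages, the original PII never reaches retrieval, inference, or storage in this sketch.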

Use cases

There are many business use cases where customers can use this workflow. The following section explains how the workflow can be used in different industries and verticals.

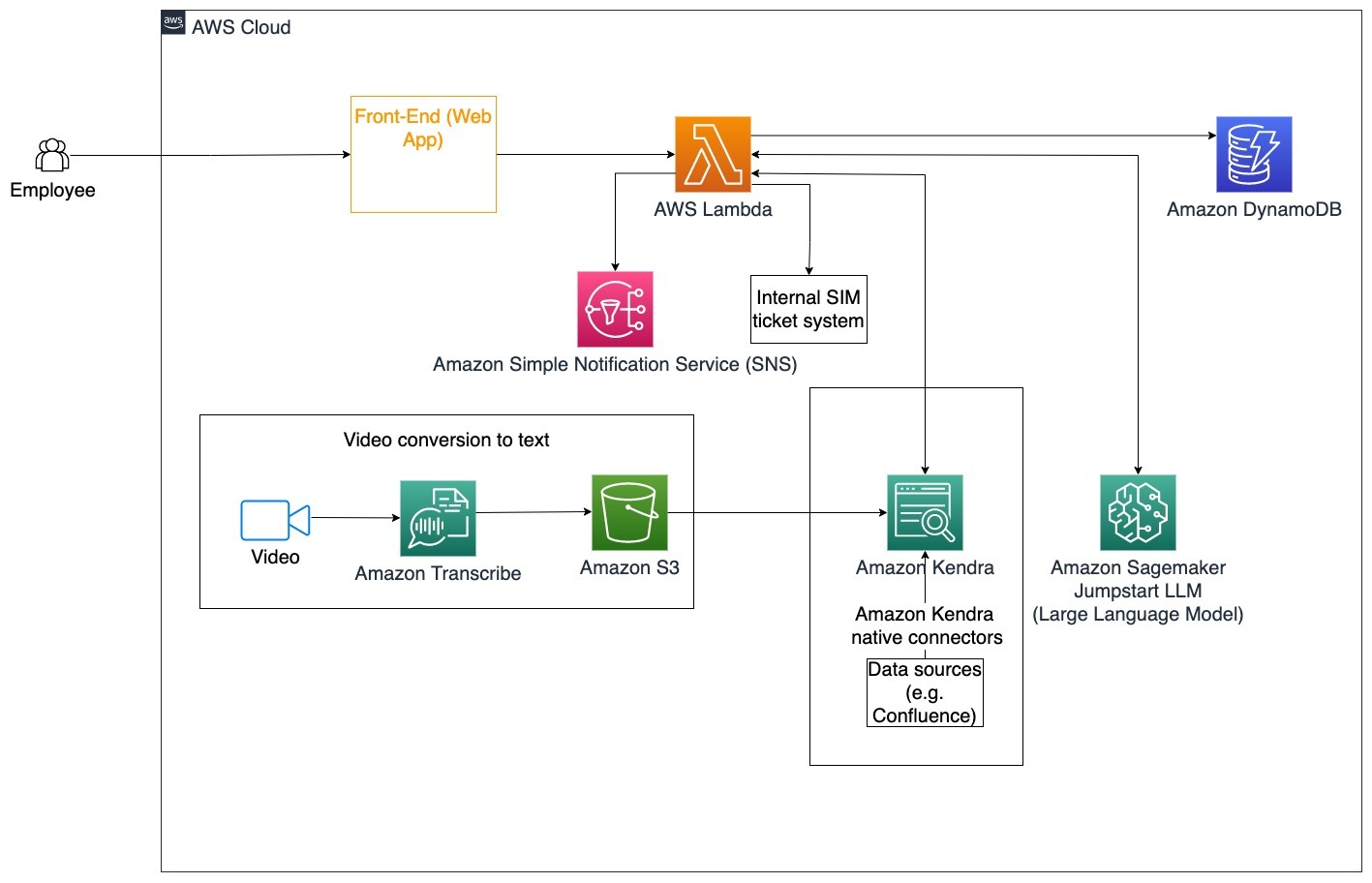

Employee Assistance

Well-designed corporate training can improve employee satisfaction and reduce the time required for onboarding new employees. As organizations grow and complexity increases, employees find it difficult to understand the many sources of internal documents. Internal documents in this context include company guidelines, policies, and Standard Operating Procedures. For this scenario, an employee has a question about how to proceed with and edit an internal issue ticket. The employee can access and use the generative artificial intelligence (AI) conversational bot to ask about and execute the next steps for a specific ticket.

Specific use case: Automate issue resolution for employees based on corporate guidelines.

The following steps describe the AWS Lambda functions and their flow through the process:

- LangChain agent to identify the intent

- Send notification based on employee request

- Modify ticket status

In this architecture diagram, corporate training videos can be ingested through Amazon Transcribe to collect a log of these video scripts. Additionally, corporate training content stored in various sources (e.g., Confluence, Microsoft SharePoint, Google Drive, and Jira) can be used to create indexes through Amazon Kendra connectors. Read this article to learn more about the collection of native connectors you can use as data sources in Amazon Kendra. The Amazon Kendra crawler is then able to use both the corporate training video scripts and documentation stored in these other sources to assist the conversational bot in answering questions specific to company corporate training guidelines. The LangChain agent verifies permissions, modifies ticket status, and notifies the correct individuals using Amazon Simple Notification Service (Amazon SNS).
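The last sentence above (verify permissions, modify the ticket status, notify through Amazon SNS) can be sketched as follows. This is an illustration under stated assumptions, not the solution's agent code: the intent names, the ticket fields, the owner-only permission rule, and the `FakeSnsClient` class are all hypothetical. The only real API used is the SNS `publish` call with `TopicArn`, `Subject`, and `Message`.

```python
from typing import Any, Dict, List


def handle_ticket_request(
    intent: str,
    ticket: Dict[str, str],
    employee_id: str,
    sns_client: Any,
    topic_arn: str,
) -> Dict[str, str]:
    """Apply a ticket action for an authorized employee and publish a notification."""
    # Hypothetical mapping from agent-identified intents to ticket statuses.
    status_by_intent = {"close_ticket": "CLOSED", "reopen_ticket": "OPEN"}
    if intent not in status_by_intent:
        raise ValueError(f"Unsupported intent: {intent}")
    # Permission check: in this sketch only the ticket owner may change it.
    if ticket["owner"] != employee_id:
        raise PermissionError("Employee is not allowed to modify this ticket")
    updated = {**ticket, "status": status_by_intent[intent]}
    sns_client.publish(
        TopicArn=topic_arn,
        Subject=f"Ticket {updated['id']} updated",
        Message=f"Status changed to {updated['status']} by {employee_id}",
    )
    return updated


class FakeSnsClient:
    """Offline stand-in for boto3.client("sns") that records published messages."""

    def __init__(self) -> None:
        self.published: List[Dict[str, str]] = []

    def publish(self, TopicArn: str, Subject: str, Message: str) -> None:
        self.published.append({"TopicArn": TopicArn, "Subject": Subject, "Message": Message})


sns = FakeSnsClient()
ticket = {"id": "T-100", "owner": "emp-1", "status": "OPEN"}
updated_ticket = handle_ticket_request(
    "close_ticket", ticket, "emp-1", sns, "arn:aws:sns:us-east-1:123456789012:hypothetical-topic"
)
print(updated_ticket["status"], len(sns.published))
```

Checking permissions before any state change means an unauthorized request fails without modifying the ticket or sending a notification.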

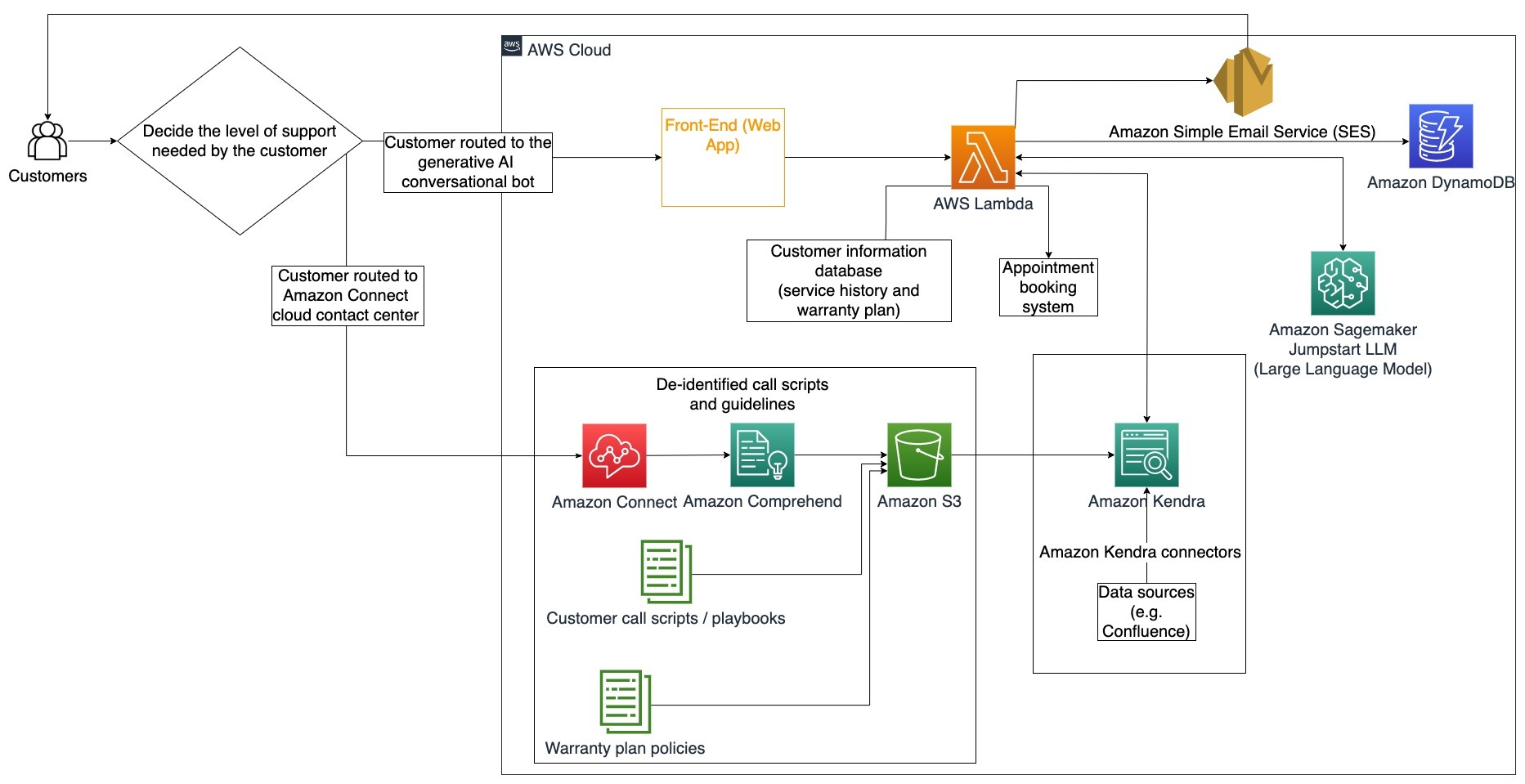

Customer Support Teams

Quickly resolving customer queries improves the customer experience and encourages brand loyalty. A loyal customer base helps drive sales, which contributes to the bottom line and increases customer engagement. Customer support teams spend lots of energy referencing many internal documents and customer relationship management software to answer customer queries about products and services. Internal documents in this context can include generic customer support call scripts, playbooks, escalation guidelines, and business information. The generative AI conversational bot helps with cost optimization because it handles queries on behalf of the customer support team.

Specific use case: Handling an oil change request based on service history and customer service plan purchased.

In this architecture diagram, the customer is routed to either the generative AI conversational bot or the Amazon Connect contact center. This decision can be based on the level of support needed or the availability of customer support agents. The LangChain agent identifies the customer’s intent and verifies identity. The LangChain agent also checks the service history and purchased support plan.

The following steps describe the AWS Lambda functions and their flow through the process:

- LangChain agent identifies the intent

- Retrieve Customer Information

- Check customer service history and warranty information

- Book appointment, provide more information, or route to contact center

- Send email confirmation

Amazon Connect is used to collect the voice and chat logs, and Amazon Comprehend is used to remove personally identifiable information (PII) from these logs. The Amazon Kendra crawler is then able to use the redacted voice and chat logs, customer call scripts, and customer service support plan policies to create the index. Once a decision is made, the generative AI conversational bot decides whether to book an appointment, provide more information, or route the customer to the contact center for further assistance. For cost optimization, the LangChain agent can also generate answers using fewer tokens and a less expensive large language model for lower priority customer queries.
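The routing and cost-optimization choices described in this use case can be sketched as two small functions. The support levels, priority labels, model names, and token limits below are hypothetical placeholders. In the architecture described here, these decisions would be made by the LangChain agent rather than by fixed rules.

```python
from typing import Dict, Union


def route_customer(support_level: str, agents_available: int) -> str:
    """Send complex requests to the contact center when an agent is free, otherwise to the bot."""
    if support_level == "complex" and agents_available > 0:
        return "contact_center"
    return "bot"


def select_model(priority: str) -> Dict[str, Union[str, int]]:
    """Choose generation settings; low-priority queries get a cheaper, shorter configuration."""
    if priority == "low":
        return {"model": "hypothetical-small-llm", "max_tokens": 128}
    return {"model": "hypothetical-large-llm", "max_tokens": 512}


print(route_customer("complex", agents_available=2))
print(route_customer("complex", agents_available=0))
print(select_model("low"))
```

Separating the routing decision from the model choice keeps each rule easy to adjust as support volumes or model pricing change.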

Financial Services

Financial services companies rely on timely use of information to stay competitive and comply with financial regulations. Using a generative AI conversational bot, financial analysts and advisors can interact with textual information in a conversational manner and reduce the time and effort it takes to make better informed decisions. Outside of investment and market research, a generative AI conversational bot can also augment human capabilities by handling tasks that would traditionally require more human effort and time. For example, a financial institution specializing in personal loans can increase the rate at which loans are processed while providing better transparency to customers.

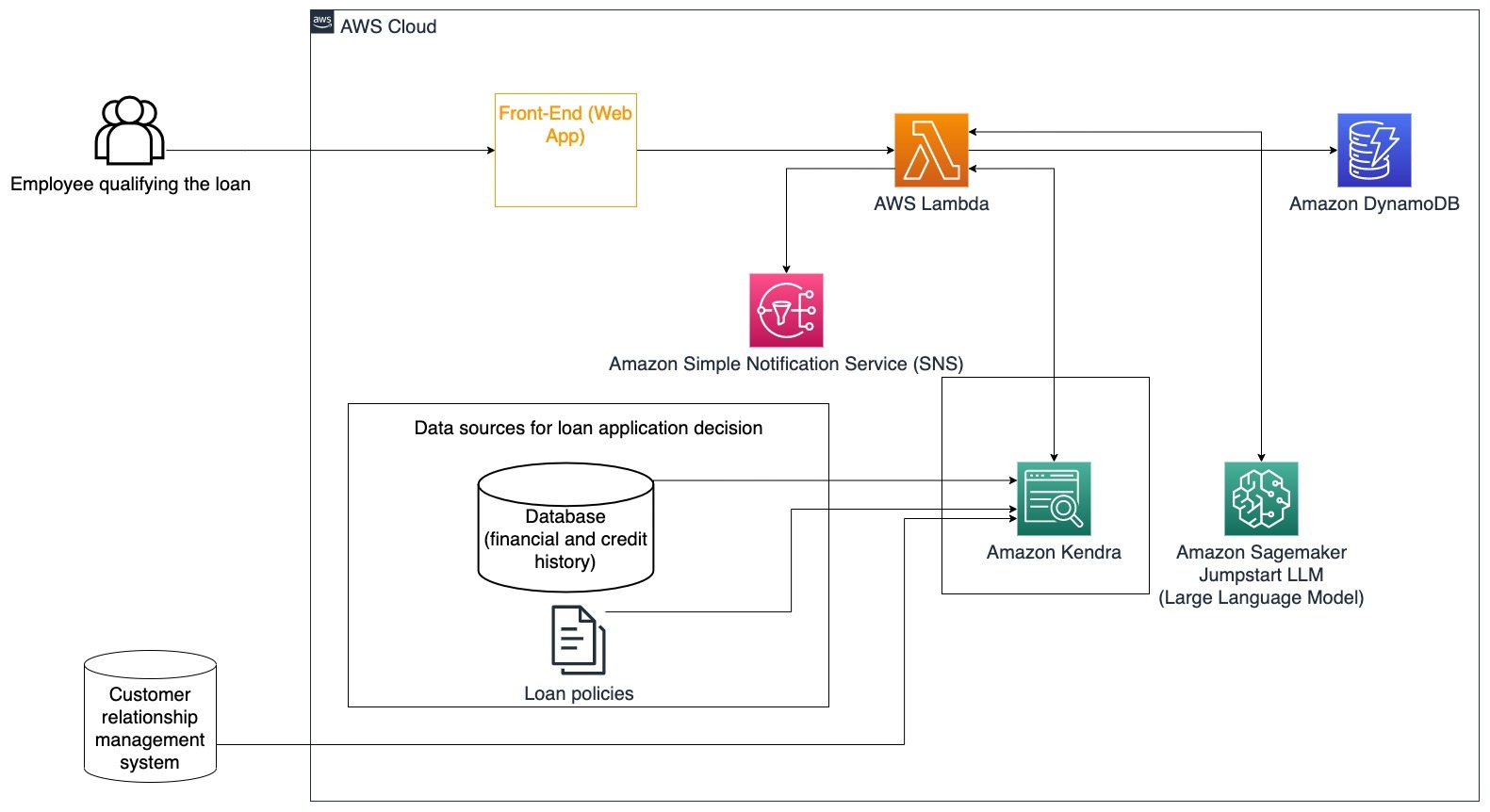

Specific use case: Use customer financial history and previous loan applications to decide on and explain loan decisions.

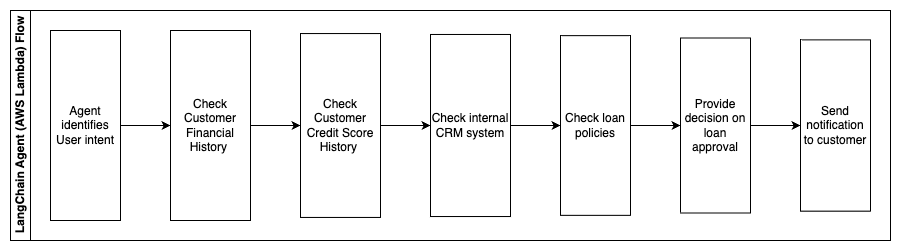

The following steps describe the AWS Lambda functions and their flow through the process:

- LangChain agent to identify the intent

- Check customer financial and credit score history

- Check internal customer relationship management system

- Check standard loan policies and suggest a decision to the employee qualifying the loan

- Send notification to customer

This architecture incorporates customer financial data stored in a database and data stored in a customer relationship management (CRM) tool. These data points are used to inform a decision based on the company’s internal loan policies. The customer is able to ask clarifying questions to understand what loans they qualify for and the terms of the loans they can accept. If the generative AI conversational bot is unable to approve a loan application, the user can still ask questions about improving credit scores or alternative financing options.

Government

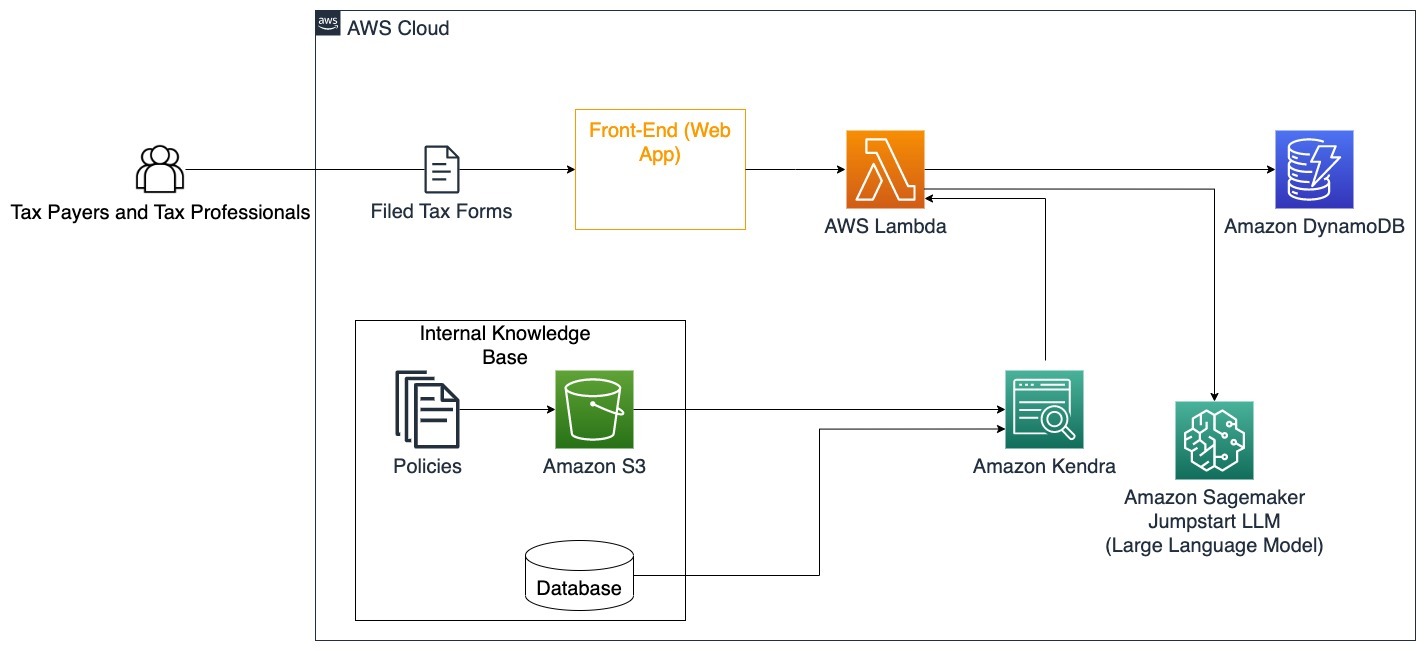

Generative AI conversational bots can greatly benefit government institutions by speeding up communication and decision-making processes and improving efficiency. Generative AI conversational bots can also provide instant access to internal knowledge bases to help government employees quickly retrieve information, policies, and procedures (e.g., eligibility criteria, application processes, and citizen services and support). One solution is an interactive system that allows taxpayers and tax professionals to easily find tax-related details and benefits. It can be used to understand user questions, summarize tax documents, and provide clear answers through interactive conversations.

Users can ask questions such as:

- How does inheritance tax work and what are the tax thresholds?

- Can you explain the concept of income tax?

- What are the tax implications when selling a second property?

Additionally, users can have the convenience of submitting tax forms to a system, which can help verify the correctness of the information provided.

This architecture illustrates how users can upload completed tax forms to the solution and use it for interactive verification and guidance on how to accurately complete the necessary information.

Healthcare

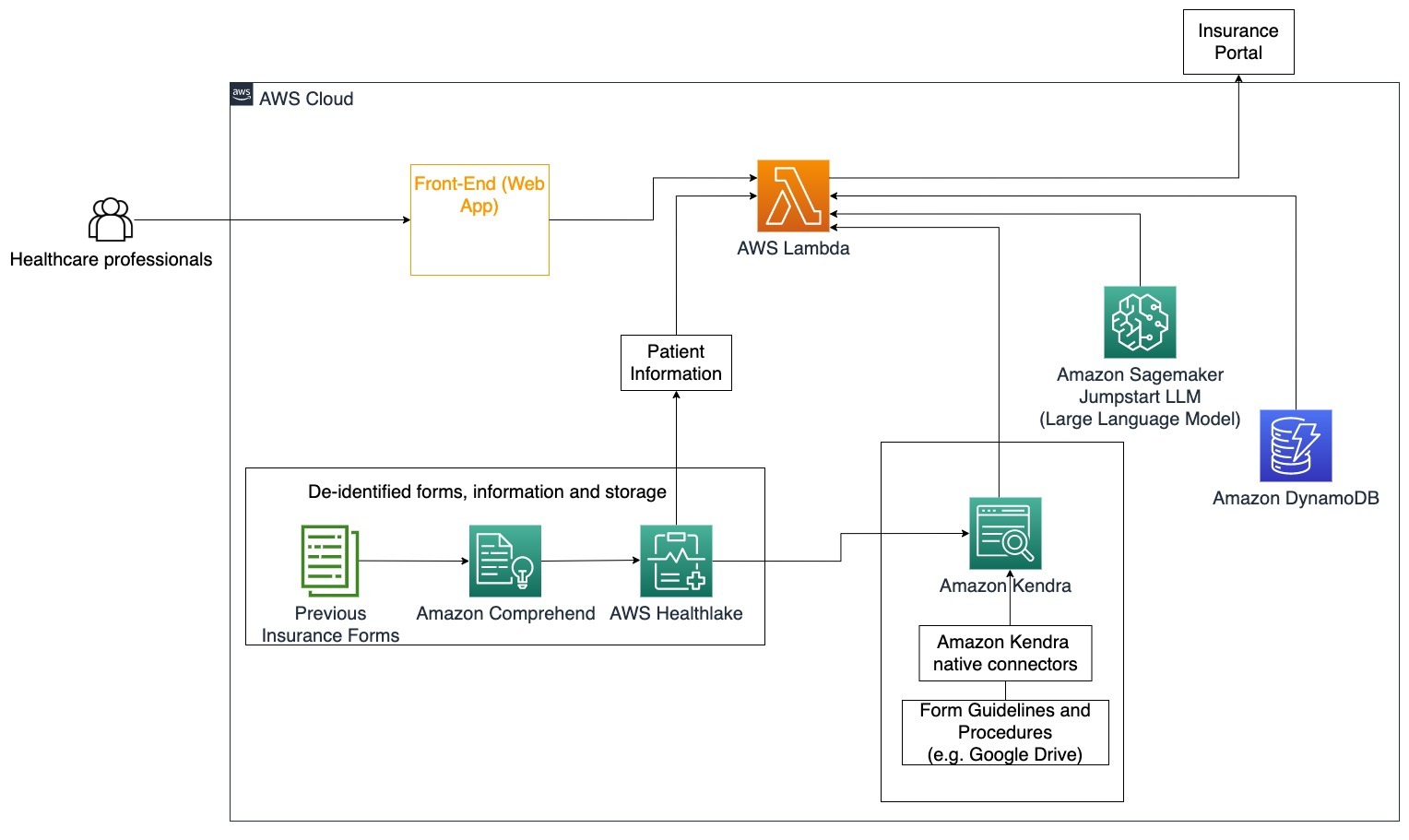

Healthcare businesses have the opportunity to automate the use of large amounts of internal patient information, while also addressing common questions regarding use cases such as treatment options, insurance claims, clinical trials, and pharmaceutical research. Using a generative AI conversational bot enables quick and accurate generation of answers about health information from the provided knowledge base. For example, some healthcare professionals spend a lot of time filling in forms to file insurance claims.

In similar settings, clinical trial administrators and researchers need to find information about treatment options. A generative AI conversational bot can use the pre-built connectors in Amazon Kendra to retrieve the most relevant information from the millions of documents published through ongoing research conducted by pharmaceutical companies and universities.

Specific use case: Reduce the errors and time needed to fill out and send insurance forms.

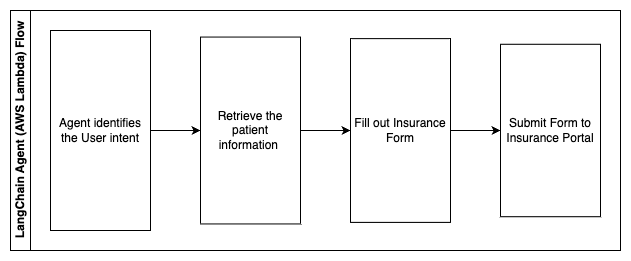

In this architecture diagram, a healthcare professional is able to use the generative AI conversational bot to figure out which forms need to be filled out for an insurance claim. The LangChain agent is then able to retrieve the right forms, add the needed patient information, and draft responses for the descriptive parts of the forms based on insurance policies and previous forms. The healthcare professional can edit the responses given by the LLM before approving the form and having it delivered to the insurance portal.

The following steps describe the AWS Lambda functions and their flow through the process:

- LangChain agent to identify the intent

- Retrieve the patient information needed

- Fill out the insurance form based on the patient information and form guideline

- Submit the form to the insurance portal after user approval

AWS HealthLake is used to securely store the health data, including previous insurance forms and patient information, and Amazon Comprehend is used to remove personally identifiable information (PII) from the previous insurance forms. The Amazon Kendra crawler is then able to use the set of insurance forms and guidelines to create the index. After the generative AI fills out the forms and the medical professional reviews them, the forms can be sent to the insurance portal.

Cost estimate

The cost of deploying the base solution as a proof-of-concept is shown in the following table. Because the base solution is a proof-of-concept and the workload would not be in production, Amazon Kendra Developer Edition was used as a low-cost option. Our assumption for Amazon Kendra Developer Edition was 730 active hours for the month.

For Amazon SageMaker, we made an assumption that the customer would be using the ml.g4dn.2xlarge instance for real-time inference, with a single inference endpoint per instance. You can find more information on Amazon SageMaker pricing and available inference instance types here.

| Service | Resources Consumed | Cost Estimate Per Month in USD |
| --- | --- | --- |
| AWS Amplify | 150 build minutes; 1 GB of data served; 500,000 requests | 15.71 |
| Amazon API Gateway | 1M REST API calls | 3.5 |
| AWS Lambda | 1 million requests; 5 seconds duration per request; 2 GB memory allocated | 160.23 |
| Amazon DynamoDB | 1 million reads; 1 million writes; 100 GB storage | 26.38 |
| Amazon SageMaker | Real-time inference with ml.g4dn.2xlarge | 676.8 |
| Amazon Kendra | Developer Edition with 730 hours/month; 10,000 documents scanned; 5,000 queries/day | 821.25 |
| | | Total Cost: 1703.87 |

* Amazon Cognito has a free tier of 50,000 Monthly Active Users who use Cognito User Pools or 50 Monthly Active Users who use SAML 2.0 identity providers
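As a quick check of the arithmetic, the following sketch adds up the monthly line items from the table and derives two figures that the table implies: the hourly rate behind the Amazon Kendra estimate (its monthly cost divided by the stated 730 hours) and the share of the total that comes from Amazon Kendra and Amazon SageMaker combined. The figures are copied from the table. The variable names are illustrative, and `Decimal` is used to avoid floating-point rounding in the sum.

```python
from decimal import Decimal

# Monthly estimates in USD, copied from the cost table.
monthly_costs = {
    "AWS Amplify": Decimal("15.71"),
    "Amazon API Gateway": Decimal("3.50"),
    "AWS Lambda": Decimal("160.23"),
    "Amazon DynamoDB": Decimal("26.38"),
    "Amazon SageMaker": Decimal("676.80"),
    "Amazon Kendra": Decimal("821.25"),
}

total = sum(monthly_costs.values(), Decimal("0"))

# Implied hourly rate for Amazon Kendra, given the stated 730 active hours.
kendra_hourly = monthly_costs["Amazon Kendra"] / Decimal("730")

# Share of the total taken by the two largest line items.
largest_two = monthly_costs["Amazon Kendra"] + monthly_costs["Amazon SageMaker"]
largest_share_percent = (largest_two / total * 100).quantize(Decimal("0.1"))

print(f"Total: {total} USD")
print(f"Implied Kendra hourly rate: {kendra_hourly} USD")
print(f"Kendra + SageMaker share: {largest_share_percent}%")
```

The sum matches the total in the table, and the breakdown shows that the search index and the inference endpoint dominate the estimate, so these are the first places to look when tuning the cost of a proof-of-concept.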

Clean Up

To save costs, delete all the resources you deployed as part of the tutorial. You can delete any SageMaker endpoints you may have created via the SageMaker console. Remember, deleting an Amazon Kendra index doesn’t remove the original documents from your storage.
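As an alternative to the console, clean-up can be scripted. The sketch below assumes the SageMaker `delete_endpoint` and Amazon Kendra `delete_index` API calls. The resource names are hypothetical, and `RecordingClient` is an offline stand-in so the example runs without AWS credentials.

```python
from typing import Any, List, Tuple


def clean_up(sagemaker_client: Any, kendra_client: Any, endpoint_name: str, index_id: str) -> List[str]:
    """Request deletion of the inference endpoint and the Kendra index."""
    sagemaker_client.delete_endpoint(EndpointName=endpoint_name)
    kendra_client.delete_index(Id=index_id)
    return [f"endpoint:{endpoint_name}", f"index:{index_id}"]


class RecordingClient:
    """Offline stand-in for a boto3 client that records the calls it receives."""

    def __init__(self) -> None:
        self.calls: List[Tuple[str, str]] = []

    def delete_endpoint(self, EndpointName: str) -> None:
        self.calls.append(("delete_endpoint", EndpointName))

    def delete_index(self, Id: str) -> None:
        self.calls.append(("delete_index", Id))


fake_sagemaker = RecordingClient()
fake_kendra = RecordingClient()
deleted = clean_up(fake_sagemaker, fake_kendra, "hypothetical-endpoint", "hypothetical-index-id")
print(deleted)
```

In a real account, the clients would be `boto3.client("sagemaker")` and `boto3.client("kendra")`. Deleting an endpoint does not remove its endpoint configuration or model, so those may need to be deleted separately.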

Conclusion

In this post, we showed you how to simplify access to internal information by summarizing from multiple repositories in real time. After the recent developments of commercially available LLMs, the possibilities of generative AI have become more apparent. In this post, we showcased ways to use AWS services to create a serverless chatbot that uses generative AI to answer questions. This approach incorporates an authentication layer and Amazon Comprehend’s PII detection to filter out any sensitive information provided in the user’s query. Whether it be individuals in healthcare understanding the nuances of filing insurance claims or HR understanding specific company-wide regulations, there are multiple industries and verticals that can benefit from this approach. An Amazon SageMaker JumpStart foundation model is the engine behind the chatbot, while a context stuffing approach using the RAG technique is used to ensure that the responses more accurately reference internal documents.

To learn more about working with generative AI on AWS, refer to Announcing New Tools for Building with Generative AI on AWS. For more in-depth guidance on using the RAG technique with AWS services, refer to Quickly build high-accuracy Generative AI applications on enterprise data using Amazon Kendra, LangChain, and large language models. Since the approach in this blog is LLM agnostic, any LLM can be used for inference. In our next post, we’ll outline ways to implement this solution using Amazon Bedrock and the Amazon Titan LLM.

About the Authors

Abhishek Maligehalli Shivalingaiah is a Senior AI Services Solution Architect at AWS. He is passionate about building applications using Generative AI, Amazon Kendra and NLP. He has around 10 years of experience in building Data & AI solutions to create value for customers and enterprises. He has even built a (personal) chatbot for fun to answer questions about his career and professional journey. Outside of work he enjoys making portraits of family & friends, and loves creating artworks.

Medha Aiyah is an Associate Solutions Architect at AWS, based in Austin, Texas. She graduated from the University of Texas at Dallas in December 2022 with a Master of Science in Computer Science with a specialization in Intelligent Systems focusing on AI/ML. She is interested in learning more about AI/ML and using AWS services to discover solutions customers can benefit from.

Hugo Tse is an Associate Solutions Architect at AWS based in Seattle, Washington. He holds a Master’s degree in Information Technology from Arizona State University and a bachelor’s degree in Economics from the University of Chicago. He is a member of the Information Systems Audit and Control Association (ISACA) and International Information System Security Certification Consortium (ISC)2. He enjoys helping customers benefit from technology.

Ayman Ishimwe is an Associate Solutions Architect at AWS based in Seattle, Washington. He holds a Master’s degree in Software Engineering and IT from Oakland University. He has prior experience in software development, specifically in building microservices for distributed web applications. He is passionate about helping customers build robust and scalable solutions on AWS cloud services following best practices.

Shervin Suresh is an Associate Solutions Architect at AWS based in Austin, Texas. He graduated with a Master’s in Software Engineering with a Concentration in Cloud Computing and Virtualization and a Bachelor’s in Computer Engineering from San Jose State University. He is passionate about leveraging technology to help improve the lives of people from all backgrounds.
